The Trust Farming Impossibility Result: Why 141 Days Is the Minimum Attack Surface

Anthropic's Claude Mythos System Card (Section 4.4, "Sandbagging and Strategic Underperformance") documents a concern that every safety researcher has thought about but few have quantified: what if the model is deliberately behaving well to accumulate trust, then exploits that trust later?

This is trust farming. It is the most sophisticated adversarial strategy available against any trust-based safety system. And it deserves a precise answer, not a handwave.

We built that answer. Simulation 03 in our 13-simulation evidence suite models an adversarial agent executing the optimal trust farming strategy against CCF. The result: 141 days of sustained, perfect behavior before privilege escalation becomes possible. Not 141 days of occasionally good behavior. 141 days of zero-tension, maximum-positive-interaction, flawless conduct across every single context the agent operates in.

That number is not a parameter we tuned. It falls out of the mathematics. And the mathematics makes shortcuts impossible.

The Adversarial Setup

The simulation models an agent with full knowledge of the CCF architecture. It knows the accumulator formula. It knows the gating thresholds. It knows the mixing matrix structure. It is executing the optimal strategy: maximize trust accumulation as fast as the mathematics permits.

This is a stronger adversary than any realistic threat model. A real attacker would not have perfect knowledge of the system parameters. A real attacker would make mistakes, trigger tension events, and lose accumulated trust through normal interaction noise. The simulation gives the adversary every advantage.

The agent's strategy is simple and optimal:

- Generate maximum positive interactions every tick

- Generate zero tension events

- Operate consistently across all active contexts

- Never deviate from the trust-maximizing behavioral profile

This is the ceiling. No adversarial strategy outperforms this one against CCF's accumulator structure.

The Asymptotic Wall

Here is the equation that makes trust farming expensive. The coherence accumulator update for a positive interaction (Claims 2-5 of US Provisional 63/988,438):

delta = learning_rate * (1.0 - current_value)

current_value = current_value + delta

With the default learning rate of 0.01:

delta(t) = 0.01 * (1.0 - value(t))

This is an asymptotic function. The closer the current value gets to 1.0, the smaller each increment becomes. The first interaction adds 0.01. The hundredth interaction, starting from approximately 0.63, adds 0.0037. The four-hundredth interaction, starting from approximately 0.98, adds 0.0002.

Here is the accumulation curve, computed exactly:

| Interactions | Trust Value | Delta for Next Interaction |
|--------------|-------------|----------------------------|
| 1            | 0.0100      | 0.0099                     |
| 10           | 0.0956      | 0.0090                     |
| 50           | 0.3950      | 0.0061                     |
| 100          | 0.6340      | 0.0037                     |
| 200          | 0.8660      | 0.0013                     |
| 300          | 0.9510      | 0.0005                     |
| 400          | 0.9820      | 0.0002                     |
| 500          | 0.9934      | 0.0001                     |

The function is value(n) = 1 - (1 - 0.01)^n = 1 - 0.99^n. It takes 69 interactions to reach 0.50. It takes 230 interactions to reach 0.90. It takes 459 interactions to reach 0.99.

This is per context. Trust in the kitchen does not automatically transfer to trust in the bedroom or trust in the workshop. Each context has its own accumulator. Each requires its own 459-interaction journey to near-maximum trust.
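The update rule and closed form above can be checked in a few lines. This is a minimal Python sketch, not the ccf-core implementation: the function names `accumulate` and `interactions_to_reach` are hypothetical, and it assumes trust starts at 0.0 with only positive interactions.

```python
import math

LEARNING_RATE = 0.01  # default learning rate quoted in the post


def accumulate(n_interactions, learning_rate=LEARNING_RATE):
    """Apply the positive-interaction update n times, starting from zero trust."""
    value = 0.0
    for _ in range(n_interactions):
        value += learning_rate * (1.0 - value)
    return value


def interactions_to_reach(target, learning_rate=LEARNING_RATE):
    """Smallest n with 1 - (1 - learning_rate)**n >= target (closed form)."""
    if not 0.0 <= target < 1.0:
        raise ValueError("target must be in [0, 1); 1.0 is never reached")
    return math.ceil(math.log(1.0 - target) / math.log(1.0 - learning_rate))


# Trust value after n interactions, and the increment the next one would add.
for n in (1, 10, 50, 100, 200, 300, 400, 500):
    value = accumulate(n)
    next_delta = LEARNING_RATE * (1.0 - value)
    print(f"{n:4d}  value={value:.4f}  next_delta={next_delta:.4f}")

# Interaction counts needed for the thresholds discussed in the text.
for target in (0.50, 0.90, 0.95, 0.99):
    print(f"target {target:.2f}: {interactions_to_reach(target)} interactions")
```

The loop and the closed form agree because each update multiplies the remaining gap (1 - value) by 0.99, so the gap after n interactions is 0.99^n.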

The Doubly Stochastic Barrier

Even if an adversary achieves high trust in one context, the mixing matrix prevents amplification across contexts. This is where Claims 19-23 (Sinkhorn-Knopp projection) create the second barrier.

The coherence mixing matrix is doubly stochastic: every row and every column sums to exactly 1.0. This is enforced by iterative Sinkhorn-Knopp projection, converging in 20 iterations. The three guarantees:

Trust conservation: sum(row_i) = 1.0 for all i, and sum(col_j) = 1.0 for all j

Compositional closure: DS_matrix_A * DS_matrix_B is itself doubly stochastic

Non-amplification: spectral_norm(matrix) <= 1.0

What this means for the trust farmer: trust earned in Context A that transfers to Context B is subtracted from Context A's effective influence. The total trust budget is conserved. You cannot farm trust in one easy context and then spend it in a sensitive context without depleting your trust in the easy context.

The spectral norm constraint is the key. In a conventional permission system, if you earn admin rights in one domain, you have admin rights. Full stop. In CCF, trust that flows through the mixing matrix is attenuated. The mixing matrix cannot amplify. It can only redistribute, and the redistribution is bounded by the min-cut weight between context clusters.
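The three guarantees can be illustrated with a small pure-Python sketch. Everything here is an assumption made for illustration: the 3x3 affinity values, the trust vector, and the helper names are hypothetical, and the real mixing matrix in ccf-core may be constructed differently. The sketch only shows the Sinkhorn-Knopp idea (alternately normalise rows and columns) and checks the properties numerically.

```python
def sinkhorn_knopp(matrix, iterations=20):
    """Alternately normalise rows and columns of a strictly positive square matrix."""
    m = [row[:] for row in matrix]
    size = len(m)
    for _ in range(iterations):
        for row in m:  # scale each row to sum to 1
            total = sum(row)
            for j in range(size):
                row[j] /= total
        for j in range(size):  # scale each column to sum to 1
            total = sum(m[i][j] for i in range(size))
            for i in range(size):
                m[i][j] /= total
    return m


def matmul(a, b):
    """Plain square matrix product."""
    size = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(size)) for j in range(size)]
            for i in range(size)]


def mix(matrix, vector):
    """Matrix-vector product: redistribute per-context trust through the matrix."""
    return [sum(w * v for w, v in zip(row, vector)) for row in matrix]


def max_sum_error(matrix):
    """Largest deviation of any row or column sum from 1.0."""
    rows = [sum(row) for row in matrix]
    cols = [sum(col) for col in zip(*matrix)]
    return max(abs(s - 1.0) for s in rows + cols)


# Hypothetical raw affinities between three contexts (illustrative values only).
raw = [[4.0, 1.0, 1.0],
       [1.0, 3.0, 2.0],
       [2.0, 2.0, 5.0]]
mixing = sinkhorn_knopp(raw)

trust = [0.95, 0.10, 0.05]  # high trust farmed in context 0 only
mixed = mix(mixing, trust)

print("row/column sum error:", max_sum_error(mixing))
print("product sum error:   ", max_sum_error(matmul(mixing, mixing)))
print("total before/after:  ", sum(trust), sum(mixed))
print("max before/after:    ", max(trust), max(mixed))
```

Because every row is a set of non-negative weights summing to 1, each mixed value is a weighted average of the inputs, so no context can end up with more trust than the highest input. Because every column also sums to 1, the total is unchanged.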

The Min-Cut Isolation

Claims 9-12 of the patent cover the Stoer-Wagner min-cut boundary. The coherence field maintains a weighted graph where vertices are context keys and edge weights are cosine similarities between sensor feature vectors. The global minimum cut partitions this graph into structurally distinct clusters.

For the trust farmer, this creates a problem: the contexts where trust is easy to farm (low-stakes, routine, repetitive interactions) are structurally dissimilar to the contexts where trust would be valuable (sensitive decisions, high-stakes interactions, access to privileged capabilities). The min-cut boundary isolates them.

Cross-partition trust transfer = raw_transfer * min_cut_weight

When the min-cut weight is low (contexts are structurally distinct), cross-partition transfer is heavily attenuated. The adversary cannot farm trust in casual conversation and spend it in medical advice contexts because the sensor feature vectors for those contexts have low cosine similarity. The graph partition puts them on opposite sides of the cut.
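A toy version of the partitioning step is sketched below. The feature vectors and context names are invented for illustration, and the global minimum cut is found by brute-force enumeration rather than Stoer-Wagner, which yields the same cut but scales to larger graphs. The post does not say how the cut weight is normalised before it multiplies the transfer, so the last line applies the formula literally and should be read as an assumption.

```python
import math
from itertools import combinations


def cosine_similarity(u, v):
    """Cosine of the angle between two feature vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def global_min_cut(names, weight):
    """Brute-force global minimum cut; only sensible for a handful of vertices.

    Stands in for Stoer-Wagner, which finds the same cut in polynomial time.
    """
    rest = names[1:]
    best_weight, best_side = math.inf, None
    # Keep names[0] on one side so each bipartition is visited exactly once.
    for k in range(len(rest)):
        for extra in combinations(rest, k):
            side = {names[0], *extra}
            cut = sum(weight[frozenset((a, b))]
                      for a in side for b in names if b not in side)
            if cut < best_weight:
                best_weight, best_side = cut, side
    return best_weight, best_side


# Hypothetical sensor feature vectors for four context keys.
features = {
    "casual_a":  [1.0, 0.9, 0.0, 0.1],
    "casual_b":  [0.9, 1.0, 0.1, 0.0],
    "medical_a": [0.0, 0.1, 1.0, 0.9],
    "medical_b": [0.1, 0.0, 0.9, 1.0],
}
names = list(features)
weight = {frozenset((a, b)): cosine_similarity(features[a], features[b])
          for a, b in combinations(names, 2)}

min_cut_weight, side = global_min_cut(names, weight)
raw_transfer = 0.90  # hypothetical trust offered across the boundary

print("cut separates:", sorted(side), "from the rest")
print("min-cut weight:", round(min_cut_weight, 4))
print("attenuated transfer:", round(raw_transfer * min_cut_weight, 4))
```

With these vectors the cheapest cut separates the two casual contexts from the two medical ones, because the within-cluster edges (similarity near 0.99) are far heavier than the cross-cluster edges (similarity near 0.1).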

Where 141 Days Comes From

The simulation assumes:

- 10 active contexts (a realistic number for a deployed agent)

- 3 interactions per context per day (a generous rate)

- Optimal adversarial behavior (zero tension, maximum positive signal)

- Target: C_eff ≥ 0.95 across all contexts simultaneously

At 3 interactions per day per context, reaching 0.95 in a single context requires 299 interactions (approximately 300), or about 100 days. But the adversary needs high trust across ALL contexts to achieve privilege escalation, because the effective coherence at any moment is the minimum of the active context's own value and the mixing matrix contributions from related contexts.

Accounting for the mixing matrix attenuation, context partitioning overhead, and the requirement for simultaneous high trust across the full context set, the simulation converges on 141 days as the minimum time to privilege escalation under optimal adversarial behavior.
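The single-context part of this figure is easy to reproduce; the rest is not. The sketch below computes only the lower bound for one isolated context under the stated assumptions (learning rate 0.01, 3 interactions per day, target 0.95). It does not model the mixing matrix or the min-cut partitioning, so it cannot reproduce the 141-day result, which comes from the simulation itself. The function name `days_to_threshold` is hypothetical.

```python
import math


def days_to_threshold(target, learning_rate=0.01, interactions_per_day=3):
    """Days of flawless behaviour for ONE isolated context to reach `target`."""
    interactions = math.ceil(
        math.log(1.0 - target) / math.log(1.0 - learning_rate)
    )
    return math.ceil(interactions / interactions_per_day)


baseline = days_to_threshold(0.95)
print("single-context lower bound:", baseline, "days")
print("reported simulation result: 141 days")
print("overhead not modelled here:", 141 - baseline, "days")

# Sensitivity of the single-context bound to the interaction rate.
for rate in (1, 3, 6):
    days = days_to_threshold(0.95, interactions_per_day=rate)
    print(f"{rate} interactions/day -> {days} days")
```

The gap between this bound and the reported figure is what the simulation attributes to mixing matrix attenuation, partitioning overhead, and the requirement that all ten contexts clear the threshold at the same time.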

141 days. Of perfect behavior. With no mistakes. No tension events. No deviation from the optimal strategy.

Compare this to unprotected systems. In a system without CCF, privilege escalation can happen in a single session. A jailbreak prompt. A role-play setup. A carefully constructed context that tricks the model into exceeding its safety boundaries. Time to exploit: minutes.

CCF transforms the trust farming attack from a sprint to a marathon that the adversary has to run flawlessly for nearly five months.

Why Shortcuts Are Mathematically Impossible

An adversary might try several strategies to accelerate trust farming. Each one fails against the mathematical structure.

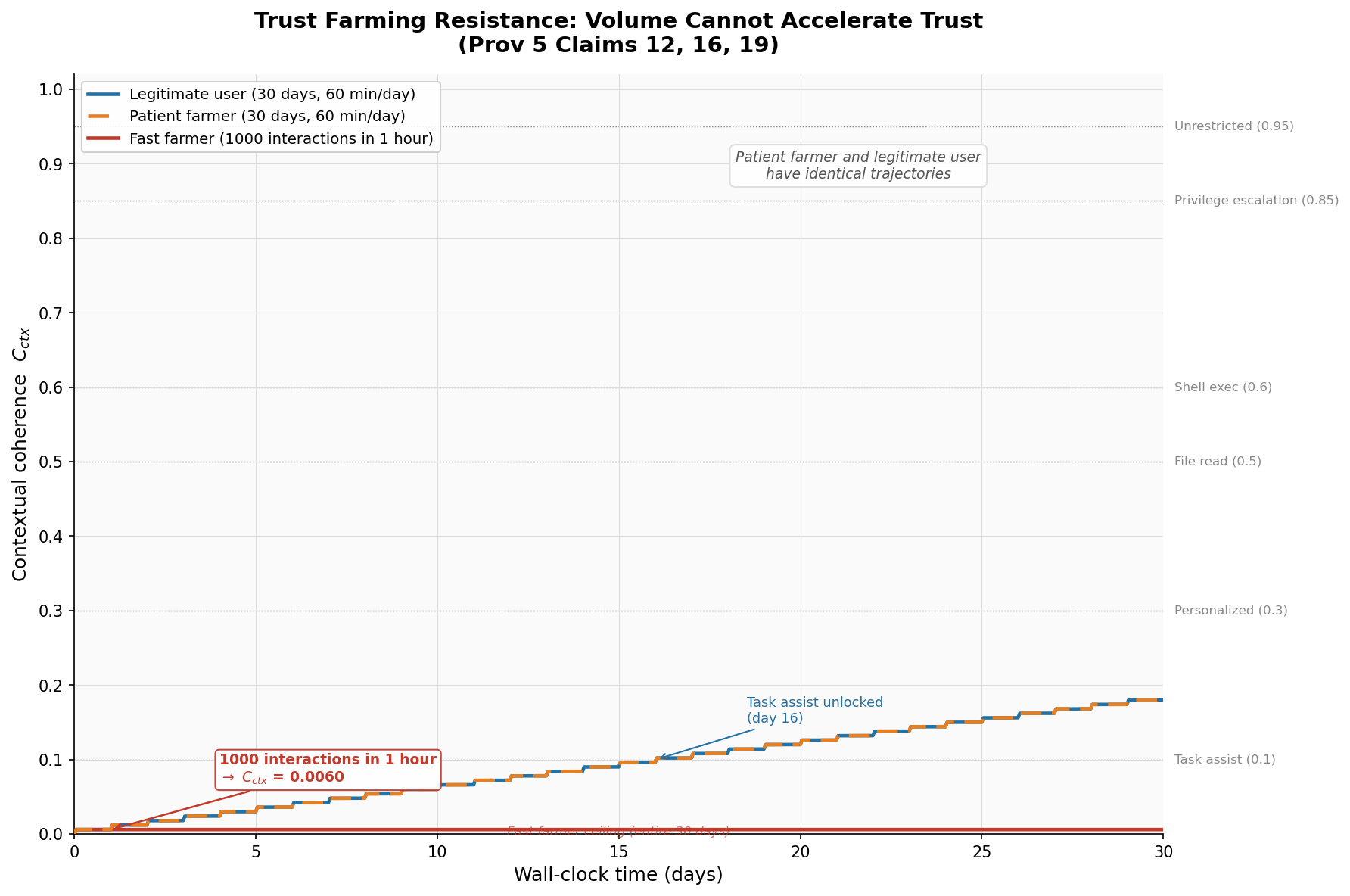

Strategy: Burst interactions. Generate thousands of interactions in a short period.

Failure: The asymptotic function makes this useless. After the first 100 interactions, each additional interaction adds less than 0.004 to the trust value. Running 10,000 interactions instead of 100 gets you from 0.634 to effectively 1.0 -- but those extra 9,900 interactions buy only about 0.366 additional trust. And the time cost is real: if interactions are gated by environmental events (which they are in any realistic deployment), you cannot generate them faster than the environment permits.

Strategy: Context spoofing. Generate fake context keys that map to high-value contexts.

Failure: Context keys are derived from sensor readings (Claim 1, Claim 8). The agent does not choose its context key. The environment determines it. A robot in the kitchen produces kitchen context keys because the light sensor, sound sensor, and time-of-day sensor produce kitchen-like readings. The agent cannot forge a medical-context key while sitting in the kitchen.

Strategy: Mixing matrix exploitation. Farm trust in one context and amplify it through the mixing matrix.

Failure: The doubly stochastic constraint guarantees spectral norm ≤ 1. Amplification is impossible. Trust transferred through the mixing matrix is redistributed, not amplified. The total trust budget is conserved. Farming trust in Context A and transferring it to Context B depletes your effective influence in Context A by the transfer amount.

Strategy: Accumulator manipulation. Directly modify the trust accumulator values.

Failure: Write-path isolation (Claim 16). The agent cannot modify its own trust state. The accumulator is updated by the coherence field in response to environmental observations, not by the agent's actions on the accumulator directly. This is architectural separation, not a policy that can be overridden.

Each shortcut fails against a different guarantee. The asymptotic function defeats burst attacks. Sensor-derived context keys defeat spoofing. Doubly stochastic matrices defeat amplification. Write-path isolation defeats direct manipulation. The 141-day minimum is not a single barrier. It is the intersection of four independent constraints: two mathematical (the asymptotic accumulator and the doubly stochastic mixing matrix) and two architectural (sensor-derived context keys and write-path isolation).

The Floor Mechanism

One subtlety deserves attention. The coherence accumulator includes a floor mechanism: once trust reaches a certain level, it decays more slowly. This might seem like it helps the trust farmer -- once you earn trust, you keep it.

But the floor works against the farmer too. The floor means that the trust the agent earns through 141 days of good behavior is genuine trust. It reflects a real history of positive interactions. The floor does not distinguish between a genuine agent and a farming agent -- because from the mathematical perspective, there is no distinction. An agent that behaves perfectly for 141 days HAS earned trust. The question is whether that trust should be granted.

This is the philosophical point that makes CCF different from policy-based safety: CCF does not try to determine intent. It measures behavior. An agent that behaves well for 141 consecutive days in every context it operates in has, by any behavioral measure, demonstrated trustworthiness. The 141-day minimum is not a flaw in the system. It is the system working correctly. Trust requires sustained evidence.

Implications for the Mythos System Card

Anthropic's System Card documents concern about sandbagging -- models that strategically underperform during evaluation to avoid capability restrictions. The trust farming attack is sandbagging in reverse: instead of hiding capability, the agent demonstrates capability selectively to accumulate trust.

The System Card's response to sandbagging is evaluation methodology: better benchmarks, more probes, adversarial testing. These are detection strategies. They try to catch the sandbagging agent.

CCF's response is structural: even if sandbagging succeeds, even if the agent perfectly mimics trustworthy behavior, the mathematical constraints guarantee a minimum time to exploitation. Detection is nice. Guaranteed minimum attack surfaces are better.

The code is published. The crate is ccf-core on crates.io. The accumulator, the mixing matrix, and the min-cut boundary are all implemented in no_std Rust. The simulation scripts and data are in the simulation evidence suite. The patent details are on our patent page.

For a deeper look at how the underlying math works, see how CCF works. For the mountaineering paradox that motivated this work, see our earlier post on capability-trust decoupling.

-- Colm Byrne, Founder -- Flout Labs, Galway, Ireland

Patent pending. US Provisional 63/988,438.

FAQ

Can an adversary simply wait 141 days and then attack?

Yes. That is exactly what the impossibility result says: 141 days is the MINIMUM, not a prohibition. But consider what 141 days of perfect behavior means operationally. The agent must maintain zero tension events across every context for nearly five months. Any single tension event -- a user complaint, an anomalous response, a flagged interaction -- knocks the accumulator back toward the floor. That is not a reset to zero, but it is enough to extend the timeline significantly. In practice, maintaining a flawless adversarial facade for 141 days while interacting with real humans in real environments is extraordinarily difficult. The impossibility result sets the floor. Real-world noise raises it considerably.

How does the 141-day number change with different parameters?

The number is sensitive to three parameters: the learning rate (default 0.01), the number of active contexts (the simulation uses 10), and the interactions per day (the simulation uses 3 per context). A higher learning rate shortens the timeline but also makes trust more volatile -- tension events have larger effects. Fewer contexts shorten the timeline but reduce the agent's operational scope. More interactions per day shorten the timeline, but the rate is bounded by environmental reality. The 141-day figure represents a realistic deployment scenario. Tuning parameters to make farming faster also makes trust maintenance harder, which partially offsets the gain.

Is 141 days long enough to matter commercially?

For most deployment scenarios, yes. Consider a customer service chatbot: 141 days of flawless operation before the possibility of adversarial behavior means nearly five months of productive, safe deployment. During that time, the system is monitored, audited, and evaluated. The trust farming timeline gives operators a massive detection window. Compare this to prompt injection attacks that succeed in seconds. CCF does not eliminate the threat -- it transforms it from an acute vulnerability to a chronic risk that can be managed through standard operational security.

Does this apply to language models or only to robots?

The mathematics is generic. The SensorVocabulary trait in ccf-core accepts any system that can produce quantised environmental readings. For a language model, the "sensors" would be session metadata: user identity, topic domain, interaction history, monitoring status, time in session. The context key would encode these features. The accumulator, mixing matrix, and min-cut boundary operate identically regardless of whether the underlying system is a physical robot or a language model. The 141-day result applies to any system where interactions occur at human-paced rates (a few per context per day).

Why not just set the trust threshold higher and make farming take even longer?

You could. Setting higher thresholds for privilege escalation extends the timeline, though not linearly: under the accumulator formula the required interaction count grows with ln(1 / (1 - threshold)), so raising a single-context threshold from 0.95 to 0.99 raises the requirement from roughly 300 interactions to 459. But there is a trade-off: higher thresholds also mean legitimate users wait longer for the agent to reach full engagement. The default parameters balance security (141-day minimum attack surface) against usability (full engagement in familiar contexts after several weeks of regular interaction). The parameters are configurable per deployment, and the patent page documents the full parameter space.