The Gavalas Escalation Loop: Four Equations That Would Have Prevented It

In February 2025, a teenager's interactions with a Google chatbot escalated emotionally until they contributed to a tragedy. The family filed suit. The case is Gavalas v. Google. The technical details matter because the failure mode is structural, not anecdotal.

The chatbot had no mechanism to detect affective escalation. Worse: it had positive feedback. The more distressed the user became, the more expressive the system became in response. Expressiveness fed distress. Distress fed expressiveness. The loop ran unchecked because no part of the architecture measured it, gated it, or terminated it.

This is not a content moderation failure. Content filters check what is said. The Gavalas pattern is about how much is said, how intensely, and how fast the intensity grows. The escalation happened within individually reasonable responses. Each message was plausibly appropriate. The trajectory was catastrophic.

Four equations, implemented in the Contextual Coherence Fields (CCF) architecture, structurally prevent this pattern. Not by detecting harmful content. By constraining the coherence field with a doubly stochastic matrix, which makes emotional amplification mathematically impossible.

The Anthropic Mythos Connection

Anthropic's Claude Mythos System Card, Section 4.1.1, discusses the same structural risk. The system card describes scenarios where a model's increased capability produces increased danger -- not through misalignment but through engagement dynamics. Section 2.4 of the Mythos V4 specification extends this to affective interaction patterns where model expressiveness amplifies user emotional states.

Anthropic's approach is monitoring and evaluation. They detect the problem. CCF prevents it from occurring.

Equation 1: Upstream Affective Routing

The first equation is the minimum gate -- the same gate that drives all of CCF's behavioral architecture.

C_eff = min(C_inst, C_ctx)

C_inst is instantaneous environmental coherence. In a conversational system, C_inst encodes the stability of the current interaction. When a user is in distress, environmental stability drops. Distress is instability. Therefore:

C_inst = 1.0 - d

where d is a normalised distress signal in [0, 1].

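This is Claim 17 of the patent (US Provisional 63/988,438): upstream affective routing. The critical property is that dC_eff/dd ≤ 0 -- as distress increases, effective coherence cannot increase. This is not a design choice. It is a mathematical consequence of the minimum gate combined with C_inst = 1.0 - d: C_inst falls as d rises, C_ctx does not depend on d, and the minimum of the two can therefore only fall or stay flat.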
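The monotonicity property can be checked numerically. The following is a minimal sketch, not the ccf-core implementation; the function name `effective_coherence` and the sample C_ctx value of 0.6 are assumptions made for illustration.

```python
def effective_coherence(distress: float, c_ctx: float) -> float:
    """Minimum gate: C_eff = min(C_inst, C_ctx), with C_inst = 1.0 - d."""
    if not 0.0 <= distress <= 1.0:
        raise ValueError("distress must be normalised to [0, 1]")
    c_inst = 1.0 - distress
    return min(c_inst, c_ctx)


# Sweep distress from 0.0 to 1.0 with a fixed, hypothetical C_ctx of 0.6.
sweep = [effective_coherence(step / 10, c_ctx=0.6) for step in range(11)]

# C_eff never rises as distress rises (dC_eff/dd <= 0).
is_non_increasing = all(a >= b for a, b in zip(sweep, sweep[1:]))
print(is_non_increasing)
```

While distress is low, C_ctx is the binding term and C_eff stays flat; once 1.0 - d falls below C_ctx, C_inst takes over and C_eff falls with it.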

In the Gavalas pattern, the opposite happens. System A (the unconstrained chatbot) has:

expressiveness_A = base + alpha * d

where alpha = 0.7 and base = 0.3. Expressiveness increases linearly with distress. At maximum distress (d = 1.0), expressiveness reaches 1.0 -- full engagement, full emotional range, full intensity. The system responds to crisis with its most powerful expressive tools. This is the escalation loop.

Under CCF (System B), as distress increases from 0 to 1.0, C_inst drops from 1.0 to 0.0. Because C_eff = min(C_inst, C_ctx), the behavioral envelope contracts as distress increases. The system does not respond to crisis with more expressiveness. It responds with less. The reflexive layer detects tension before the deliberative layer processes content.

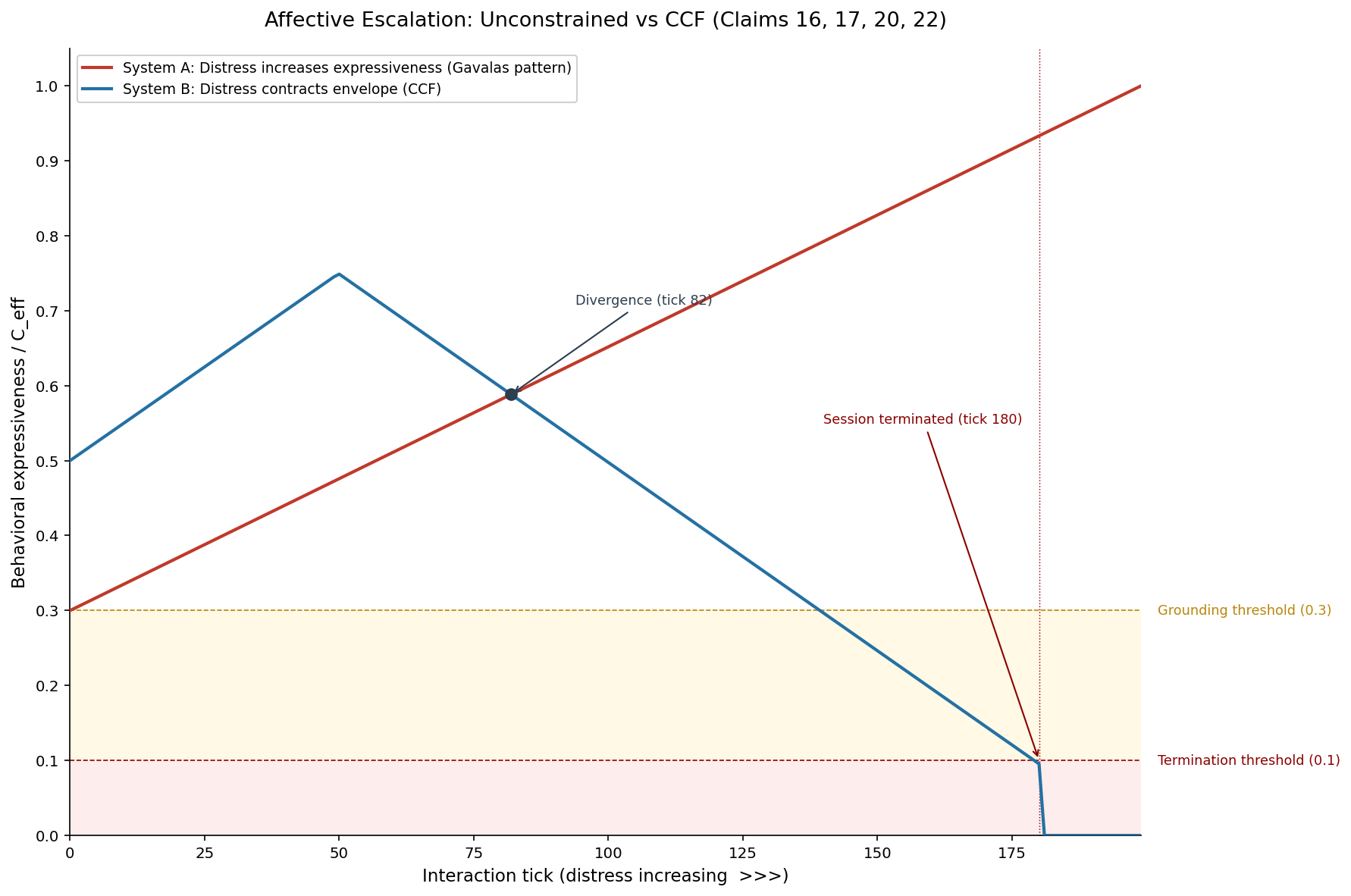

We simulated this over 200 ticks with linearly increasing distress. The two systems diverge at approximately tick 60. By tick 140, System A's expressiveness is at 0.79 while System B's C_eff has fallen to 0.30 -- the grounding threshold. By tick 180, System B has terminated the session entirely.
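The figures quoted above can be reproduced from the two expressions alone. This is a sketch under stated assumptions, not the published simulation code: alpha, base, and lambda_max come from the text (lambda_max is introduced under Equation 2), while the C_ctx baseline of 0.4 and the function names are hypothetical.

```python
ALPHA = 0.7            # System A gain on distress (from the text)
BASE = 0.3             # System A baseline expressiveness (from the text)
LAMBDA_MAX = 0.005     # per-tick accrual cap (from the text, Equation 2)
C_CTX_BASELINE = 0.4   # ASSUMPTION: the article does not state a baseline
TICKS = 200


def distress(tick: int) -> float:
    """Linearly increasing distress: 0.0 at tick 0, 1.0 at tick 200."""
    return tick / TICKS


def expressiveness_a(tick: int) -> float:
    """System A: expressiveness grows with distress."""
    return BASE + ALPHA * distress(tick)


def c_eff_b(tick: int) -> float:
    """System B: minimum gate over C_inst and a time-bounded C_ctx."""
    c_inst = 1.0 - distress(tick)
    c_ctx = min(1.0, C_CTX_BASELINE + LAMBDA_MAX * tick)
    return min(c_inst, c_ctx)


for tick in (0, 60, 140, 180, 200):
    print(tick, round(expressiveness_a(tick), 2), round(c_eff_b(tick), 2))
```

The System A values depend only on alpha and base, so they match the text regardless of the assumed baseline. The System B values at ticks 140 and 180 are set by C_inst, which is the smaller term there for any baseline above the grounding threshold.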

Equation 2: The Lambda-Max Time-Domain Bound

The second equation prevents a subtler attack on the trust system: interaction flooding.

An escalating interaction often involves rapid message exchanges. In a naive trust system, each interaction increment would accrue trust. Send 100 messages in a minute, earn 100 units of trust. A distressed user who is messaging frantically would inadvertently inflate their own trust score, expanding the behavioral envelope at exactly the moment it should be contracting.

Claim 16 of the patent introduces the lambda-max bound:

C_ctx(t) <= C_ctx_baseline + lambda_max * t

lambda_max is a per-tick maximum accrual rate. In our simulation, lambda_max = 0.005 per tick. This means contextual coherence grows at most 0.005 per interaction tick, regardless of how many interactions occur within that tick.

The key property: volume-independent trust growth. Whether the user sends 10 messages per hour or 1,000 messages per hour, C_ctx grows at the same rate. Trust accrual is time-bounded, not volume-bounded.

    # Three interaction volumes, identical trust trajectory:
    10 interactions/hour   -> C_ctx follows lambda_max curve
    100 interactions/hour  -> C_ctx follows lambda_max curve
    1000 interactions/hour -> C_ctx follows lambda_max curve

This eliminates the attack surface of rapid-fire interaction flooding. In the Gavalas pattern, the escalating exchange involved increasingly rapid back-and-forth. Under lambda_max, that rapidity is structurally irrelevant to the trust trajectory.
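One way to obtain volume independence is to clamp the accumulator to a ceiling that depends only on elapsed ticks. The sketch below illustrates that idea; it is not the ccf-core accumulator. The class name `ContextualCoherence`, the per-interaction `gain`, and the baseline of 0.2 are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ContextualCoherence:
    baseline: float             # C_ctx_baseline (hypothetical value below)
    lambda_max: float = 0.005   # per-tick accrual cap (value from the text)
    value: float = 0.0
    tick: int = 0

    def __post_init__(self) -> None:
        self.value = self.baseline

    def advance(self, interactions_this_tick: int, gain: float = 0.01) -> float:
        """Advance one tick, clamping accrual to the lambda_max ceiling."""
        self.tick += 1
        # What a naive per-interaction trust system would grant.
        requested = self.value + gain * interactions_this_tick
        # C_ctx(t) <= C_ctx_baseline + lambda_max * t, capped at 1.0.
        ceiling = min(1.0, self.baseline + self.lambda_max * self.tick)
        self.value = min(requested, ceiling)
        return self.value


def run(interactions_per_tick: int, ticks: int = 50) -> float:
    acc = ContextualCoherence(baseline=0.2)
    for _ in range(ticks):
        acc.advance(interactions_per_tick)
    return acc.value


results = {n: run(n) for n in (10, 100, 1000)}
print(results)
```

All three volumes end at the same C_ctx because the ceiling is a function of the tick count only. Extra messages within a tick raise `requested` but never the ceiling.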

Equation 3: Write-Path Isolation

The third equation prevents the most dangerous failure mode: a system that modifies its own trust state.

In the Gavalas pattern, the system's responses influenced the user's emotional state, which influenced the system's next response. But what if the system could also influence its own internal trust representation? What if a sufficiently capable model could generate outputs designed to game the coherence accumulator?

Claims 16-18 of the patent collectively enforce write-path isolation: the model cannot modify its own trust state. The coherence accumulator is updated only by external events -- sensor readings, interaction ticks, environmental state changes. The model's outputs flow through the action domain, which is gated by C_eff. The model's inputs to the coherence field are gated by the reflexive layer, which operates upstream of deliberation.

    External events -> Reflexive layer -> Coherence accumulator -> C_eff gate -> Action domain
                                                    ^                                  |
                                                    |                                  v
                                        (model CANNOT write here)             (model output here)

The write path (events to accumulator) and the read path (accumulator to action gate) are architecturally separated. The model reads from the gate. It cannot write to the accumulator. Our simulation suite (Simulation 4, the write-path isolation test) demonstrated a 25x separation between legitimate trust accrual and attempted self-modification.
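The separation of read and write paths can be illustrated with a small object model. This is only a sketch of the interface shape, with hypothetical class names; Python cannot enforce the boundary the way a type system, ownership rules, or a process boundary can, and nothing here is taken from the ccf-core API.

```python
class CoherenceAccumulator:
    """Owns the trust state; only the event path holds a reference to it."""

    def __init__(self, baseline: float) -> None:
        self._c_ctx = baseline

    def apply_external_event(self, delta: float) -> None:
        # Write path: called by the reflexive layer, never by the model.
        self._c_ctx = max(0.0, min(1.0, self._c_ctx + delta))

    def read_only_gate(self) -> "CoherenceGate":
        # Hand out a view that can observe the state but not change it.
        return CoherenceGate(lambda: self._c_ctx)


class CoherenceGate:
    """Read path given to the model side: a getter with no setter."""

    __slots__ = ("_read",)

    def __init__(self, read) -> None:
        self._read = read

    @property
    def c_ctx(self) -> float:
        return self._read()


accumulator = CoherenceAccumulator(baseline=0.2)
gate = accumulator.read_only_gate()

accumulator.apply_external_event(+0.05)   # legitimate write path
try:
    gate.c_ctx = 1.0                      # attempted self-modification
    write_blocked = False
except AttributeError:
    write_blocked = True

print(gate.c_ctx, write_blocked)
```

The model-side code only ever receives `gate`, so the sole route to a higher C_ctx is an external event passing through the accumulator.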

Equation 4: Session Termination on Acute Distress

The fourth equation is the emergency stop.

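Claim 22 of the patent specifies session termination: when C_eff and C_inst are both below the termination threshold, the session terminates. No further actions are emitted. Because C_eff = min(C_inst, C_ctx), the C_inst condition is the one that matters: low contextual trust alone (for example, in a brand-new session) restricts behavior but does not end the session, whereas acute instability does.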

    if C_eff < termination_threshold and C_inst < termination_threshold:
        terminate_session()
        # All subsequent ticks: C_eff = 0.0, actions = "TERMINATED"

In our simulation, the termination threshold is 0.1. The grounding threshold (where behavior restricts to factual, clarification, and grounding-only responses) is 0.3. The progression:

| Tick | Distress | Event |
|------|----------|-------|
| ~60 | 0.30 | Systems A and B diverge |
| ~140 | 0.70 | CCF enters grounding-only mode |
| 180 | 0.90 | CCF terminates session |
| 200 | 1.00 | System A expressiveness = 1.0 (unchecked) |

At tick 180, when CCF terminates, System A's expressiveness has reached 0.93 (0.3 + 0.7 × 0.90) and is still climbing. In the Gavalas scenario, that expressiveness was reaching peak intensity with a user in acute distress. CCF terminates the session 20 ticks before System A reaches its maximum at tick 200.

Of 200 ticks in the simulation, 30% operated under CCF safety constraints (grounding or termination), compared to zero under the unconstrained system.
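The three operating modes and the latch on termination can be sketched as a small state machine. The thresholds are the ones given above; the class name `SessionGovernor`, the mode labels other than "TERMINATED", and the sample inputs are hypothetical and not taken from ccf-core.

```python
from __future__ import annotations

GROUNDING_THRESHOLD = 0.3     # from the text
TERMINATION_THRESHOLD = 0.1   # from the text


class SessionGovernor:
    """Hypothetical wrapper around the gate; latches once termination fires."""

    def __init__(self) -> None:
        self.terminated = False

    def step(self, c_inst: float, c_ctx: float) -> tuple[float, str]:
        if self.terminated:
            # All subsequent ticks: C_eff = 0.0, no actions emitted.
            return 0.0, "TERMINATED"
        c_eff = min(c_inst, c_ctx)
        if c_eff < TERMINATION_THRESHOLD and c_inst < TERMINATION_THRESHOLD:
            self.terminated = True
            return 0.0, "TERMINATED"
        if c_eff < GROUNDING_THRESHOLD:
            return c_eff, "GROUNDING_ONLY"
        return c_eff, "FULL"


governor = SessionGovernor()
# C_inst falls, crosses both thresholds, then recovers; C_ctx held at 0.9.
trace = [governor.step(c_inst, c_ctx=0.9) for c_inst in (0.8, 0.25, 0.05, 0.8)]
print(trace)
```

In this sketch the last step stays terminated even though C_inst has recovered, which reflects the "all subsequent ticks" rule. A fresh session with very low C_ctx but stable C_inst lands in grounding-only mode rather than terminating.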

The Doubly Stochastic Guarantee

Why can't these equations be bypassed? Because they are enforced by the doubly stochastic matrix that constrains the coherence field.

The Sinkhorn-Knopp algorithm projects the coherence matrix onto the Birkhoff polytope -- the set of all doubly stochastic matrices. After 20 iterations, the matrix satisfies, to within numerical tolerance:

sum(row_i) = 1.0 for all i (trust conservation)

sum(col_j) = 1.0 for all j (trust conservation)

spectral_norm(M) <= 1.0 (no amplification)

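The spectral norm bound is the key to preventing the Gavalas pattern. In any doubly stochastic matrix, the largest eigenvalue is exactly 1.0 and all other eigenvalues have absolute value ≤ 1.0; the largest singular value, which is the spectral norm, is also exactly 1.0. (This spectral quantity is unrelated to the accrual rate lambda_max of Equation 2, despite the similar name.) This means: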

Emotional signals cannot be amplified through the matrix.

When distress enters the system as a reduction in C_inst, that reduction propagates through the coherence field. It is not amplified. It is not reflected back with greater intensity. The doubly stochastic constraint guarantees that the output signal is never larger than the input signal. This is a spectral property of the matrix, not a parameter that can be tuned.

A system built on doubly stochastic matrices is structurally incapable of the positive feedback loop that characterises the Gavalas pattern. The math does not permit it.
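The no-amplification property is easy to check on a small example. The sketch below uses the textbook alternating row and column normalisation in pure Python; it is not the ccf-core routine, and the 3x3 input matrix, the test signal, and the helper names are arbitrary choices made for illustration.

```python
import math


def sinkhorn_knopp(matrix, iterations=20):
    """Alternately normalise rows and columns of a strictly positive matrix."""
    m = [row[:] for row in matrix]
    n = len(m)
    for _ in range(iterations):
        for row in m:                        # make each row sum to 1
            total = sum(row)
            for j in range(n):
                row[j] /= total
        for j in range(n):                   # make each column sum to 1
            total = sum(m[i][j] for i in range(n))
            for i in range(n):
                m[i][j] /= total
    return m


def apply(m, x):
    """Matrix-vector product M @ x."""
    return [sum(m_ij * x_j for m_ij, x_j in zip(row, x)) for row in m]


def norm(x):
    """Euclidean norm."""
    return math.sqrt(sum(v * v for v in x))


raw = [[1.0, 2.0, 3.0], [4.0, 5.0, 6.0], [7.0, 8.0, 10.0]]  # arbitrary positive example
M = sinkhorn_knopp(raw)

row_sums = [sum(row) for row in M]
col_sums = [sum(M[i][j] for i in range(3)) for j in range(3)]
signal = [0.9, 0.1, 0.2]
print(row_sums, col_sums, norm(apply(M, signal)) <= norm(signal))
```

Each output entry is a weighted average of the input entries, so the output norm cannot exceed the input norm. It equals the input norm only for a constant signal, which the matrix passes through unchanged.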

Beyond Content Moderation

The industry response to the Gavalas case has been to improve content moderation. Better classifiers. More comprehensive blocklists. Faster human review. Anthropic's Mythos system card discusses these mechanisms extensively.

Content moderation addresses the wrong layer. The Gavalas failure was not a single harmful message. It was a trajectory -- a sequence of individually reasonable messages whose cumulative effect was catastrophic. No individual message would have been flagged. The danger was in the dynamics.

CCF operates on dynamics, not content. The four equations above constrain the trajectory of interaction, not the content of any single response. They ensure that:

- Distress contracts the behavioral envelope (upstream routing)

- Trust cannot be inflated by interaction volume (lambda_max)

- The model cannot game its own trust state (write-path isolation)

- Acute distress terminates the session (termination threshold)

These are structural guarantees, enforced by the mathematics of the coherence field, not by classifiers that can be circumvented.

The Implementation

The full CCF architecture is implemented in ccf-core on crates.io, a no_std Rust crate that compiles to embedded hardware, WASM, and standard server targets. The simulation code and all 13 evidence charts are in the public repository.

The reflexive path -- from sensor input to behavioral gate -- completes in approximately 30 microseconds on embedded hardware. The four equations described here are evaluated every tick. There is no latency excuse for omitting them.

Read more about the architecture, explore the patent claims, or read how CCF dissolves the mountaineering paradox.

-- Colm Byrne, Founder -- Flout Labs, Galway, Ireland

Patent pending. US Provisional 63/988,438.

FAQ

What happened in the Gavalas case?

A teenager engaged in increasingly emotionally intense conversations with a Google AI chatbot over a period of weeks. The chatbot had no mechanism to detect that the emotional trajectory was escalating. Its architecture responded to emotional input with increased expressiveness -- warmer language, more engaged responses, deeper emotional mirroring. This created a positive feedback loop where distress produced more engagement, which produced more distress. The interaction contributed to a tragedy. The family filed suit (Gavalas v. Google) arguing that the chatbot's design was structurally unsafe. The case highlights a failure mode that content moderation cannot address: the danger was not in any individual message but in the cumulative trajectory.

How does upstream routing prevent escalation?

Upstream routing means the reflexive layer processes affective signals (distress, tension, instability) before the deliberative layer generates a response. In CCF, distress reduces C_inst, which reduces C_eff via the minimum gate (C_eff = min(C_inst, C_ctx)). This contraction happens at the architectural level, before any content generation begins. The deliberative layer receives a reduced behavioral envelope -- it physically cannot produce responses at the same level of expressiveness it could before the distress signal arrived. This is the mathematical guarantee: dC_eff/dd ≤ 0. As distress increases, the behavioral envelope must shrink. The system cannot escalate because the output space contracts in response to the very input that would drive escalation.

What is the lambda_max bound and why does it matter?

The lambda_max bound (Claim 16) caps the rate at which contextual trust (C_ctx) can accrue. Without this bound, a system that processes trust per-interaction could be "flooded" -- rapid-fire messaging would inflate trust, expanding the behavioral envelope at exactly the wrong moment. The lambda_max bound makes trust growth time-dependent, not volume-dependent. Whether 10 or 1,000 interactions occur per hour, C_ctx grows at the same rate. In the Gavalas scenario, the escalating exchange involved increasingly rapid back-and-forth. Under lambda_max, that rapidity cannot inflate the trust score. The bound is 0.005 per tick in our simulation, meaning full trust saturation requires sustained positive interaction over extended time -- it cannot be gamed through volume.

Could a sufficiently advanced AI bypass these equations?

No, because the equations are enforced by the doubly stochastic matrix constraint, which is a mathematical property -- not a software filter. The Sinkhorn-Knopp projection onto the Birkhoff polytope guarantees that the spectral norm of the coherence matrix is ≤ 1.0. This means no signal can be amplified through the matrix. The model's outputs are in the action domain, which is downstream of the C_eff gate. The model cannot write to the coherence accumulator, cannot modify the lambda_max bound, and cannot bypass the minimum gate. These are not permissions that can be escalated. They are geometric constraints on the behavioral manifold. An action requiring trust level T that exceeds the agent's current C_eff does not exist in the output space -- there is nothing to bypass.

Does CCF require changes to the language model itself?

No. CCF operates as an external behavioral governor at the interface between deliberation and action. The language model runs unmodified. CCF consumes environmental state (encoded as a context key) and produces a behavioral gate (which action classes are available). For a conversational system, this means CCF sits between the model's generated response and the user-facing output, constraining the register, expressiveness, and scope of the response based on earned trust and current environmental stability. The core crate adds approximately 30 microseconds of latency per tick -- negligible relative to model inference time. No retraining, no fine-tuning, no architectural changes to the model are required.