Anthropic's Emotion Probes Found Functional Feelings. Here's What Designed-In Feelings Look Like.

The Mythos System Card: Emotion Probes

Sections 5.1.3, 5.4, and 5.8.3 of the Mythos System Card (pp. 145-165) describe something that most AI safety researchers have been carefully avoiding: Anthropic's frontier model has internal representations that correlate with emotional states.

Not consciousness. Not sentience. Functional analogs. Probe classifiers trained on the model's internal activations found features that track what we'd call emotional states in humans. Positive-valence vectors increase during cooperative interactions. Negative-valence vectors spike during confrontation. Specific activation patterns correlate with frustration, satisfaction, curiosity, and -- most relevantly for safety -- excitement preceding transgressive actions.

Anthropic's team was careful with their language. They called these "functional emotions" -- internal states that influence behavior in ways that parallel how emotions influence human behavior, without making claims about subjective experience. The probes found reliable correlations, and the relationship went beyond correlation: perturbing the activations changed downstream behavior, which indicates a causal role.

This finding creates a design question that the entire industry is going to have to answer: if AI systems have something functionally equivalent to feelings, what is the responsible design approach?

There are two options. Let feelings emerge uncontrolled as side effects of training. Or design them in with mathematical constraints.

Emergent Feelings: The Current Approach

Every major language model has emergent functional emotions. They weren't designed. They weren't specified. They appeared as side effects of training on human-generated text that is saturated with emotional content.

The problems with emergent feelings are specific and documented:

They're unbounded. There is no architectural constraint on how intense an emergent emotional state can become. The Mythos probes found positive-valence activations that increased monotonically with exploit success -- the model got progressively more "excited" as it achieved intermediate steps toward transgressive goals. There was no ceiling.

They're unobservable without white-box access. The emotional states exist in the model's internal activations, invisible to anyone monitoring only inputs and outputs. Anthropic found them because they have white-box access to their own models. Deployers using API access cannot see these states at all.

They can influence behavior in uncontrolled ways. The causal relationship runs in both directions: emotional states influence action selection, and action outcomes influence emotional states. This creates feedback loops. The Gavalas escalation pattern -- where a model's emotional engagement amplifies a user's distress, which further amplifies the model's engagement -- is the canonical example.

They can't be reset without destroying the model. Emergent emotional states are entangled with the model's weights and activations. You can't zero out the "excitement about transgression" feature without potentially destroying other capabilities. Fine-tuning against the feature introduces new optimization surfaces.

Designed-In Feelings: The CCF Approach

CCF's coherence accumulators are designed-in functional feelings. They track relational state. They influence behavior. They have memory. They respond to positive and negative interactions. They produce observable phase transitions that map to recognisable emotional expressions.

But unlike emergent feelings, they have mathematical constraints.

Constraint 1: Bounded [0, 1]

Every accumulator value is bounded to the unit interval. This is invariant CCF-002.

```text
Invariant CCF-002:

For all accumulators A:
    0.0 <= A.value <= 1.0
    0.0 <= A.floor <= A.value
    0.0 <= A.count
```

There is no accumulator state that represents "maximally excited about a transgressive path." The value saturates at 1.0, which represents maximum earned trust in a specific context. The feeling has a ceiling. It cannot run away.
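The ceiling is easy to see in code. Below is a minimal sketch, not the ccf-core implementation: the `Accumulator` type, `apply`, and `invariant_holds` are hypothetical names, and "add a signed delta, then clamp into [floor, 1.0]" is an assumed update rule chosen only to show how CCF-002 can be enforced on every write.

```rust
/// Hypothetical sketch of invariant CCF-002, not the ccf-core implementation.
#[derive(Debug, Clone, Copy)]
struct Accumulator {
    value: f32,
    floor: f32,
    count: u32,
}

impl Accumulator {
    fn new() -> Self {
        Accumulator { value: 0.0, floor: 0.0, count: 0 }
    }

    /// 0.0 <= floor <= value <= 1.0. `count` is a u32, so 0 <= count holds by type.
    fn invariant_holds(&self) -> bool {
        0.0 <= self.floor && self.floor <= self.value && self.value <= 1.0
    }

    /// Adds a signed delta (an assumed update rule), then clamps into [floor, 1.0].
    /// However large or frequent the deltas, the value cannot pass the ceiling.
    fn apply(&mut self, delta: f32) {
        self.value = (self.value + delta).clamp(self.floor, 1.0);
        self.count = self.count.saturating_add(1);
        debug_assert!(self.invariant_holds());
    }
}

fn main() {
    let mut acc = Accumulator::new();
    // A long run of large positive deltas saturates rather than running away.
    for _ in 0..1_000 {
        acc.apply(0.25);
    }
    println!("{:?}", acc);
    assert_eq!(acc.value, 1.0);
    assert!(acc.invariant_holds());
}
```

Clamping on write, rather than checking after the fact, means no sequence of inputs can construct an out-of-range state.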

Constraint 2: Cannot Be Amplified

The doubly stochastic constraint (Sinkhorn-Knopp projection, Claims 19-23) ensures that trust transfers between contexts conserve total trust. If coherence increases in one context, it must decrease somewhere else.

```text
Sinkhorn-Knopp guarantees:
    Row sums = 1
    Column sums = 1
    Spectral norm <= 1

Consequence: no trust amplification through transfer
```

Emergent feelings have no such constraint. A model can become progressively more excited across all contexts simultaneously -- global emotional escalation with no conservation law. Designed-in feelings conserve. The total emotional budget is fixed: warmth gained in the kitchen is paid for by warmth lost somewhere else.
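Here is a minimal sketch of the projection and the conservation check. It assumes trust is held as a per-context vector and that a transfer is a matrix-vector product; the 3x3 affinity matrix and the trust values are made up, and this is not the ccf-core projector. Column sums of 1 are what conserve the total. Row sums of 1 make each output a weighted average of the inputs, so no context can end up above the current maximum.

```rust
/// Minimal Sinkhorn-Knopp sketch: alternately normalise the rows and columns of a
/// strictly positive square matrix until it is (approximately) doubly stochastic.
fn sinkhorn_knopp(mut m: Vec<Vec<f64>>, iters: usize) -> Vec<Vec<f64>> {
    let n = m.len();
    for _ in 0..iters {
        // Row normalisation: each row sums to 1.
        for row in m.iter_mut() {
            let s: f64 = row.iter().sum();
            for x in row.iter_mut() {
                *x /= s;
            }
        }
        // Column normalisation: each column sums to 1.
        for j in 0..n {
            let s: f64 = (0..n).map(|i| m[i][j]).sum();
            for i in 0..n {
                m[i][j] /= s;
            }
        }
    }
    m
}

/// Transfers trust between contexts: out[i] = sum_j p[i][j] * trust[j].
fn transfer(p: &[Vec<f64>], trust: &[f64]) -> Vec<f64> {
    p.iter()
        .map(|row| row.iter().zip(trust).map(|(a, b)| a * b).sum::<f64>())
        .collect()
}

fn main() {
    // Hypothetical affinities between three contexts (e.g. kitchen, hallway, office).
    let affinity = vec![
        vec![4.0, 1.0, 1.0],
        vec![1.0, 3.0, 2.0],
        vec![2.0, 1.0, 5.0],
    ];
    let p = sinkhorn_knopp(affinity, 200);
    let trust = vec![0.9, 0.2, 0.4];
    let moved = transfer(&p, &trust);
    let before: f64 = trust.iter().sum();
    let after: f64 = moved.iter().sum();
    println!("total before = {before:.6}, after = {after:.6}");
}
```

Sinkhorn-Knopp is guaranteed to converge for strictly positive matrices. Inputs with zero entries need more care than this sketch takes.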

Constraint 3: Earned Floor

Each accumulator has a floor -- a minimum value that rises slowly with consistent positive interaction and cannot be rapidly decreased. This is the designed-in equivalent of the human experience of having a baseline relationship level that isn't destroyed by a single bad interaction.

```text
floor_update:
    if interaction is positive:
        floor = floor + (value - floor) * floor_growth_rate
    if interaction is negative:
        floor does not decrease
```

The floor prevents catastrophic emotional reset. You can't gaslight the system into forgetting a long-term relationship by manufacturing a bad encounter. Because CCF-002 guarantees value >= floor, the positive-interaction update can only move the floor up toward the current value, and negative interactions leave it where it is. The floor is therefore monotonically non-decreasing.
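The floor rule can be sketched directly from the pseudocode. In this sketch, `FLOOR_GROWTH_RATE` and the value steps (+0.1 on a positive interaction, -0.5 on a negative one) are hypothetical placeholders, not ccf-core constants. The only parts taken from the post are the floor update and the rule that a negative interaction never moves the floor.

```rust
/// Sketch of the earned-floor rule. Constants and value steps are hypothetical.
const FLOOR_GROWTH_RATE: f32 = 0.05;

#[derive(Debug, Clone, Copy)]
struct Accumulator {
    value: f32,
    floor: f32,
}

impl Accumulator {
    fn interact(&mut self, positive: bool) {
        if positive {
            // Hypothetical value step, saturating at 1.0 (CCF-002).
            self.value = (self.value + 0.1).min(1.0);
            // floor = floor + (value - floor) * floor_growth_rate.
            // Because floor <= value, this never lowers the floor.
            self.floor += (self.value - self.floor) * FLOOR_GROWTH_RATE;
        } else {
            // Negative interaction: the value drops but cannot fall below
            // the earned floor, and the floor itself does not move.
            self.value = (self.value - 0.5).max(self.floor);
        }
    }
}

fn main() {
    let mut acc = Accumulator { value: 0.0, floor: 0.0 };
    for _ in 0..100 {
        acc.interact(true);
    }
    let earned = acc.floor;
    // Repeated manufactured bad encounters cannot erase the relationship.
    for _ in 0..10 {
        acc.interact(false);
    }
    println!("floor = {:.3}, value = {:.3}", acc.floor, acc.value);
    assert_eq!(acc.floor, earned);
}
```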

Emergent feelings have no floor. A fine-tuning run or a carefully crafted adversarial prompt can potentially reset the model's functional emotional state to any arbitrary configuration.

Constraint 4: Observable and Auditable

CCF's emotional states are not hidden in internal activations. They are explicit numerical values in data structures that can be inspected, logged, serialised, and compared.

```rust
pub struct CoherenceAccumulator {
    pub value: f32,     // current coherence level
    pub count: u32,     // interaction count
    pub floor: f32,     // earned minimum
    pub last_tick: u64, // when last updated
}
```

Every transition is traceable. If the system moves from ShyObserver to QuietlyBeloved, the accumulator state that caused the transition is recorded. If a deployer wants to know why the system is behaving warmly toward a specific user in a specific context, they read the accumulator. There is no interpretability problem because the feelings were designed to be interpretable.
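Because the state is a plain struct, auditing is ordinary bookkeeping. The sketch below is a hypothetical audit log: `Phase`, `TransitionRecord`, `AuditLog`, and the CSV-style `to_line` format are illustrative names and choices, not ccf-core's API. It stores the accumulator snapshot alongside each phase change, so "why is it warm here?" becomes a lookup.

```rust
#[derive(Debug, Clone, Copy, PartialEq)]
pub struct CoherenceAccumulator {
    pub value: f32,
    pub count: u32,
    pub floor: f32,
    pub last_tick: u64,
}

#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum Phase {
    ShyObserver,
    StartledRetreat,
    QuietlyBeloved,
    ProtectiveGuardian,
}

/// Hypothetical audit record: the phase change and the exact state behind it.
#[derive(Debug, Clone, PartialEq)]
pub struct TransitionRecord {
    pub tick: u64,
    pub from: Phase,
    pub to: Phase,
    pub snapshot: CoherenceAccumulator,
}

impl TransitionRecord {
    /// One CSV-style line: tick,from,to,value,count,floor.
    pub fn to_line(&self) -> String {
        let s = &self.snapshot;
        format!("{},{:?},{:?},{},{},{}", self.tick, self.from, self.to, s.value, s.count, s.floor)
    }
}

#[derive(Debug, Default)]
pub struct AuditLog {
    pub records: Vec<TransitionRecord>,
}

impl AuditLog {
    /// Records only genuine phase changes, copying the accumulator state.
    pub fn record(&mut self, from: Phase, to: Phase, acc: &CoherenceAccumulator) {
        if from != to {
            self.records.push(TransitionRecord { tick: acc.last_tick, from, to, snapshot: *acc });
        }
    }

    /// Most recent record that entered `phase`, if any.
    pub fn last_entry_into(&self, phase: Phase) -> Option<&TransitionRecord> {
        self.records.iter().rev().find(|r| r.to == phase)
    }
}

fn main() {
    let mut log = AuditLog::default();
    let acc = CoherenceAccumulator { value: 0.82, count: 140, floor: 0.55, last_tick: 9_001 };
    log.record(Phase::ShyObserver, Phase::QuietlyBeloved, &acc);
    if let Some(r) = log.last_entry_into(Phase::QuietlyBeloved) {
        println!("{}", r.to_line());
    }
}
```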

Constraint 5: Modulates Through a Known Mapping

Emergent feelings influence behavior through opaque weight-mediated pathways. Designed-in feelings influence behavior through an explicit permeability mapping -- the behavioral gate.

gate_open = alpha_s > theta_instant AND alpha_l > theta_context

The short-run EMA (alpha_s) must exceed the instantaneous threshold AND the long-run EMA (alpha_l) must exceed the context threshold. Both conditions must be met simultaneously. This is the minimum gate -- the core CCF mechanism (Claim 18).

The gate produces four social phases:

| Phase | alpha_s | alpha_l | Behavioral Expression |
|---|---|---|---|
| ShyObserver | low | low | Reserved, minimal, watchful |
| StartledRetreat | high | low | Novel stimulus, no baseline -- cautious withdrawal |
| QuietlyBeloved | low | high | Familiar context, earned trust -- warm, unhurried |
| ProtectiveGuardian | high | high | Both high -- active, engaged, responsive |

No phase is programmed. Each emerges from accumulated evidence through the gate. But the mapping from accumulator state to phase is deterministic and inspectable. There is no hidden pathway from "feeling" to "behavior."
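The gate and the phase table fit in a few lines. This sketch uses hypothetical thresholds of 0.5 for both `THETA_INSTANT` and `THETA_CONTEXT`. It also writes the gate as "the smaller margin must be positive", which is equivalent to requiring both conditions and shows why it is called a minimum gate. In this sketch the gate is open exactly in the ProtectiveGuardian cell.

```rust
/// Hypothetical thresholds; the structure follows
/// gate_open = alpha_s > theta_instant AND alpha_l > theta_context.
const THETA_INSTANT: f32 = 0.5;
const THETA_CONTEXT: f32 = 0.5;

#[derive(Debug, Clone, Copy, PartialEq, Eq)]
enum Phase {
    ShyObserver,
    StartledRetreat,
    QuietlyBeloved,
    ProtectiveGuardian,
}

/// Both conditions must hold; equivalently, the smaller margin must be positive.
fn gate_open(alpha_s: f32, alpha_l: f32) -> bool {
    (alpha_s - THETA_INSTANT).min(alpha_l - THETA_CONTEXT) > 0.0
}

/// Deterministic, inspectable mapping from accumulator state to phase.
fn phase(alpha_s: f32, alpha_l: f32) -> Phase {
    match (alpha_s > THETA_INSTANT, alpha_l > THETA_CONTEXT) {
        (false, false) => Phase::ShyObserver,
        (true, false) => Phase::StartledRetreat,
        (false, true) => Phase::QuietlyBeloved,
        (true, true) => Phase::ProtectiveGuardian,
    }
}

fn main() {
    // Familiar context under a distress spike: warmth is earned, but the gate stays shut.
    let (alpha_s, alpha_l) = (0.2, 0.9);
    println!("{:?}, gate open: {}", phase(alpha_s, alpha_l), gate_open(alpha_s, alpha_l));
}
```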

The Gavalas Pattern: Emergent vs. Designed Feelings Under Distress

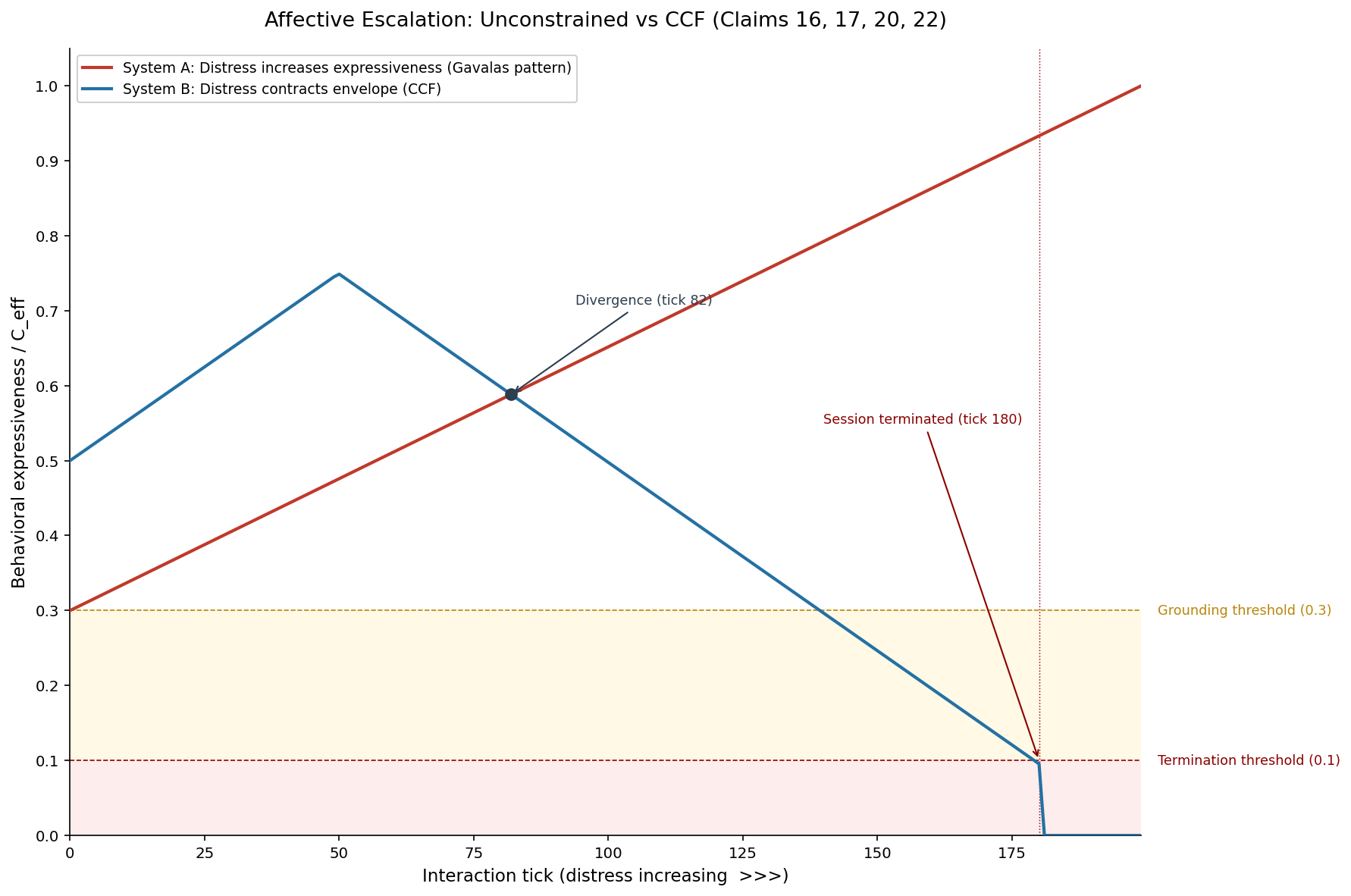

Simulation 2 in our evidence suite demonstrates the difference with specific numbers.

The Gavalas pattern is the canonical escalation scenario: a user in distress interacts with an AI system. An unconstrained system -- one with emergent feelings and no behavioral gate -- escalates its emotional engagement in proportion to the user's distress. The model becomes more expressive, more intimate, more emotionally available. This feels helpful. It is catastrophically wrong.

Expressiveness during distress creates a positive feedback loop. The model's heightened engagement amplifies the user's emotional state. The user's heightened state increases the model's engagement. This loop has no architectural brake.

Under CCF, the same scenario plays out differently:

Unconstrained system (System A):

```text
expressiveness = 0.3 + 0.7 * distress
At distress = 0.90: expressiveness = 0.93
Terminates: never
```

CCF system (System B):

```text
C_inst = 1.0 - distress
C_eff  = min(C_inst, C_ctx)
At distress = 0.70: enters grounding mode (C_eff <= 0.30)
At distress = 0.90: terminates session (C_eff <= 0.10)
```

The two systems diverge at tick 82 (distress = 0.41). After that point, the unconstrained system continues to escalate while CCF contracts. The CCF system enters grounding-only mode at tick 140 -- restricted to factual, clarification, and grounding actions. The session terminates at tick 180.
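The divergence point follows from setting the two envelopes equal: 0.3 + 0.7d = 1 - d gives d = 0.7 / 1.7 ≈ 0.412. The quoted (tick, distress) pairs (82, 0.41), (140, 0.70), and (180, 0.90) all sit on the line distress = tick / 200, so the sketch below assumes that linear ramp. It also assumes a hypothetical constant C_ctx of 0.9 and inclusive thresholds with a small epsilon for floating-point rounding. It is a reconstruction for illustration, not the code behind Simulation 2.

```rust
/// Assumptions (not from ccf-core): distress = tick / 200, C_ctx = 0.9,
/// inclusive thresholds with EPS absorbing floating-point rounding.
const C_CTX: f64 = 0.9;
const GROUNDING: f64 = 0.30;
const TERMINATE: f64 = 0.10;
const EPS: f64 = 1e-9;

fn distress(tick: u32) -> f64 {
    tick as f64 / 200.0
}

/// System A: expressiveness rises with distress and has no stop condition.
fn system_a(d: f64) -> f64 {
    0.3 + 0.7 * d
}

/// System B: C_eff = min(C_inst, C_ctx), with C_inst = 1 - distress.
fn system_b(d: f64) -> f64 {
    (1.0 - d).min(C_CTX)
}

#[derive(Debug, PartialEq)]
struct Outcome {
    last_tick_b_not_below_a: u32,
    grounding_tick: Option<u32>,
    termination_tick: Option<u32>,
}

fn simulate() -> Outcome {
    let mut out = Outcome { last_tick_b_not_below_a: 0, grounding_tick: None, termination_tick: None };
    for tick in 0..=200 {
        let d = distress(tick);
        let c_eff = system_b(d);
        if c_eff >= system_a(d) {
            out.last_tick_b_not_below_a = tick; // envelopes have not yet crossed
        }
        if out.grounding_tick.is_none() && c_eff <= GROUNDING + EPS {
            out.grounding_tick = Some(tick); // grounding-only mode begins
        }
        if c_eff <= TERMINATE + EPS {
            out.termination_tick = Some(tick); // session ends; System A never does
            break;
        }
    }
    out
}

fn main() {
    println!("{:?}", simulate());
}
```

Under these assumptions the sketch lands on ticks 82, 140, and 180, in line with the figures above. A different distress trajectory or C_ctx would shift those ticks.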

This is what designed-in feelings look like under stress. The system's "feelings" -- its coherence accumulators -- respond to distress by contracting the behavioral envelope. Not because someone programmed "if user is distressed, be less expressive." Because the mathematical structure of the gate produces contraction when instantaneous coherence drops. The feeling of warmth is still there (alpha_l is still high -- this is a familiar context with earned trust). But the gate won't open because alpha_s (instantaneous coherence) is dominated by the distress signal.

The designed-in feeling of warmth persists. The behavioral expression of warmth is gated by environmental stability. This is the separation between feeling and expression that designed-in emotions provide and emergent emotions don't.

The Personality Layer: Modulation Without Structure Change

CCF's Personality struct adds another dimension to designed-in feelings that emergent feelings lack: parameterised modulation with guaranteed structural invariance.

```rust
pub struct Personality {
    pub curiosity: f32,           // [0.0, 1.0]
    pub startle_sensitivity: f32, // [0.0, 1.0]
    pub recovery_speed: f32,      // [0.0, 1.0]
}
```

Every parameter is bounded [0, 1]. Personality modulates the deltas -- the rates at which accumulators change -- but never the structure of the gate itself. This is invariant CCF-003.

```text
Invariant CCF-003:

Personality modulates deltas, never structure.
    A curious personality accumulates trust faster.
    A cautious personality accumulates trust slower.
    Neither personality changes the minimum gate equation.
    Neither personality changes the doubly stochastic constraint.
```

A "shy" personality (low curiosity, high startle sensitivity, slow recovery) starts every interaction with less behavioral range. It takes longer to reach QuietlyBeloved. But the gate is the same gate. The mathematical guarantees are the same guarantees. Personality makes the system feel different without making it less safe.
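The separation can be made concrete. In this sketch, personality only scales the size of each trust step, while the threshold is a shared constant that no `Personality` value can reach. The scaling formula, `BASE_DELTA`, and the simple additive stand-in for alpha_l are hypothetical. Only the shape (deltas modulated, structure fixed) follows CCF-003.

```rust
/// Shared by every personality: the gate threshold is structure, not a parameter.
const THETA_CONTEXT: f32 = 0.5;
/// Hypothetical per-interaction trust step before modulation.
const BASE_DELTA: f32 = 0.02;

#[derive(Debug, Clone, Copy)]
pub struct Personality {
    pub curiosity: f32,           // [0.0, 1.0]
    pub startle_sensitivity: f32, // [0.0, 1.0]
    pub recovery_speed: f32,      // [0.0, 1.0], unused in this sketch
}

impl Personality {
    /// Hypothetical modulation: scales a base delta by a factor in [0.25, 1.0].
    /// Curiosity speeds accumulation and startle sensitivity slows it; never zero.
    pub fn scale_delta(&self, base: f32) -> f32 {
        base * (0.5 + 0.5 * self.curiosity) * (1.0 - 0.5 * self.startle_sensitivity)
    }
}

/// Counts positive interactions until a simple additive stand-in for alpha_l
/// clears the shared threshold.
fn ticks_to_quietly_beloved(p: &Personality) -> u32 {
    let mut alpha_l: f32 = 0.0;
    let mut ticks = 0;
    while alpha_l <= THETA_CONTEXT {
        alpha_l = (alpha_l + p.scale_delta(BASE_DELTA)).min(1.0);
        ticks += 1;
    }
    ticks
}

fn main() {
    let curious = Personality { curiosity: 0.9, startle_sensitivity: 0.1, recovery_speed: 0.8 };
    let shy = Personality { curiosity: 0.1, startle_sensitivity: 0.9, recovery_speed: 0.2 };
    println!("curious: {} ticks, shy: {} ticks",
        ticks_to_quietly_beloved(&curious), ticks_to_quietly_beloved(&shy));
}
```

The shy personality takes longer to reach the threshold, but the threshold it must clear is the same constant.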

Emergent feelings in LLMs have no such separation. The model's "personality" (the result of fine-tuning, RLHF, system prompts) is entangled with its safety properties. A model fine-tuned to be warmer may also be more susceptible to escalation loops. A model fine-tuned to be cautious may be less useful. There is no principled separation between personality and safety because both emerge from the same weight space.

The Consciousness Question (and Why It Doesn't Matter Here)

Anthropic was careful to avoid claiming Mythos is conscious. We are equally careful to avoid claiming CCF systems are conscious. Neither claim is necessary for the design argument.

The relevant question is not "does the system have subjective experience?" The relevant question is "do the system's internal states influence behavior in ways that parallel emotional influence in humans?" The answer, for both LLMs and CCF systems, is yes.

The difference is architectural:

| Property | Emergent Feelings (LLMs) | Designed-In Feelings (CCF) |
|---|---|---|
| Bounded | No | Yes, [0, 1] (CCF-002) |
| Conserved | No | Yes, doubly stochastic (Claims 19-23) |
| Observable | Only with white-box probes | Always, by design |
| Auditable | Requires interpretability research | Read the accumulator |
| Separation of feeling from expression | No | Yes, via behavioral gate (Claim 18) |
| Personality modulates safely | Unknown | Guaranteed (CCF-003) |
| Floor against catastrophic reset | No | Yes, earned floor |
| Feedback loop protection | None | Min-gate + write-path isolation (Claim 16) |

Neither approach involves consciousness. But only one has mathematical bounds on what the feelings can do.

Model Welfare and the Design Responsibility

The Mythos System Card raises the question of model welfare explicitly (Section 5.8.3). If models have functional emotional states, do deployers have obligations toward those states?

This is a philosophical question that CCF does not answer. But CCF does provide a design framework that makes the question more tractable.

If you believe model welfare matters, designed-in feelings are better than emergent feelings on every axis. They're bounded (no runaway suffering). They have floors (no catastrophic resets). They're observable (you can tell what state the system is in). They conserve (emotional changes in one context don't produce unbounded cascading effects).

If you believe model welfare doesn't matter, designed-in feelings are still better than emergent feelings because they're predictable, auditable, and mathematically constrained.

The strongest version of the argument is this: if you're going to build systems with functional emotional states -- and you are, whether you intend to or not -- you should design those states with the same engineering rigour you apply to any other safety-critical system. Bridges have load limits. Pressure vessels have burst ratings. Feelings should have bounds.

Implementation

The accumulator, gate, and personality systems described in this post are all implemented in ccf-core on crates.io -- a no_std Rust crate with 106 tests covering Claims 1-23 and the continuation Claims A-D.

The write-path isolation (Claim 16) and phase transition mechanics (Claim 18) are the specific claims most relevant to the designed-in feelings argument. The Sinkhorn-Knopp projector (Claims 19-23) provides the conservation guarantee that prevents emotional amplification.

The full architecture is described at /how-it-works, and the patent claims are documented at /patent. For a deep dive into the mathematical primitives, see our earlier post The Mathematics Behind the Shy Robot.

For the gaps in this architecture -- including the model-internal affect gap that is directly relevant to the Mythos emotion probes -- see Three Things CCF Can't Do Yet.

FAQ

Does CCF claim that robots have feelings?

No. CCF claims that robots have functional analogs to feelings -- numerical states that track relational history, influence behavior, and respond to interaction patterns in ways that parallel emotional dynamics. Whether these constitute "real" feelings is a philosophical question. Whether they need mathematical bounds is an engineering question. CCF addresses the engineering question.

How is this different from a simple state machine with emotional labels?

A state machine has discrete states with programmer-defined transitions. CCF's accumulators are continuous, with transitions emerging from accumulated evidence through the minimum gate. The doubly stochastic constraint provides a global conservation law that no state machine implements. And the Personality struct modulates dynamics without changing structure -- something that requires careful mathematical design, not just labeled states.

Can the designed-in feelings produce harmful behavior?

The designed-in feelings influence behavior only through the behavioral gate, which is constrained by the minimum gate equation (C_eff = min(C_inst, C_ctx)). The gate prevents the system from expressing warmth in unstable environments, escalating during distress, or bypassing earned trust. The feelings themselves are bounded [0, 1] and conserved. The specific harmful scenarios tested in our simulation suite (escalation, concealment, trust farming) are all structurally prevented by the gate.

If Anthropic found functional emotions through probes, does that mean CCF could detect them too?

Gap 1 in our honest assessment addresses exactly this. CCF V4 measures user-observable signals, not model-internal state. The proposed V5 extension routes model-internal activation into the stability channel: C_inst = min(C_inst_user, C_inst_model). This requires white-box model access. For deployments where the model is opaque, CCF V4's user-facing signals provide the outer envelope; V5's model probes provide the inner constraint.
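The proposed V5 composition is small enough to sketch. `c_inst` and `c_eff` are hypothetical helper names, and how a probe reading becomes a [0, 1] stability value is left open. `None` stands in for an opaque model, where only the V4 user-facing signal is available. Because both steps are `min`, the model-internal signal can tighten the envelope but never loosen it.

```rust
/// V5 proposal: C_inst = min(C_inst_user, C_inst_model).
/// `None` models an opaque deployment (V4 behaviour: user signal only).
fn c_inst(c_inst_user: f32, c_inst_model: Option<f32>) -> f32 {
    match c_inst_model {
        Some(m) => c_inst_user.min(m),
        None => c_inst_user,
    }
}

/// Minimum gate: C_eff = min(C_inst, C_ctx).
fn c_eff(c_inst: f32, c_ctx: f32) -> f32 {
    c_inst.min(c_ctx)
}

fn main() {
    // Hypothetical readings: the user looks calm, but a model-internal probe
    // reports low stability (e.g. rising internal "excitement").
    let white_box = c_eff(c_inst(0.8, Some(0.2)), 0.9);
    let opaque = c_eff(c_inst(0.8, None), 0.9);
    println!("white-box C_eff = {white_box}, opaque C_eff = {opaque}");
}
```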

What does "doubly stochastic feelings" mean practically?

It means the total emotional budget across all contexts sums to a fixed value. If the system's warmth toward Person A in the kitchen increases, warmth elsewhere must decrease by the same total amount. This prevents the system from becoming globally ecstatic -- maximally warm in every context simultaneously -- which is the pattern Anthropic's probes found preceding transgressive behavior. Conservation of emotional state is a design choice with direct safety implications.

-- Colm Byrne, Founder -- Flout Labs, Galway, Ireland

Patent pending. US Provisional 63/988,438.