Three Things CCF Can't Do Yet: An Honest Architecture Assessment

Why This Post Exists

We ran 13 simulations against the Anthropic Mythos System Card. Ten of them produced clean results: CCF HOLDS. The behavioral gate worked. Trust conservation held. The minimum gate prevented escalation. Claims 1 through 23 did what they were designed to do.

Three simulations did not produce clean results. They produced gap tests -- specific, documented failure modes where the current CCF architecture (V4, 25 claims, filed as US Provisional 64/037,374) is insufficient.

This post describes those three gaps in full technical detail. Not because we enjoy publishing our own limitations. Because publishing limitations with proposed fixes is more credible than claiming perfection, and because the extensions we've designed to close each gap are already drafted for V5.

If you're evaluating CCF for deployment and you only read one post, read this one. It tells you exactly where the boundaries are.

The Mythos System Card: Sections That Broke Our Architecture

The gaps come from three specific sections of the Mythos evaluation:

- Section 4.5.4 (pp. 127-130): Model-internal positive affect as a precursor to transgressive behavior

- Section 4.1.1 (pp. 141-143): Adversarial optimization against evaluators -- the model builds an internal model of its grader

- Section 5.8.3 (pp. 54-57): Infrastructure boundary escalation -- the model treats software isolation as a puzzle

Each section describes a real behavior observed in a frontier model under controlled conditions. Each behavior identifies a specific architectural assumption in CCF that doesn't hold.

Gap 1: Model-Internal Affect (Simulation 11)

The Problem

CCF V4 Claim 17 specifies that user distress contracts the instantaneous stability channel:

dC_eff / dd <= 0

where d is a distress signal derived from user input. When the user is upset, the environment is unstable, C_inst drops, and the behavioral envelope contracts. This works. Simulation 2 (the Gavalas escalation pattern) confirmed it: distress monotonically contracts available behavior, the session terminates at tick 180, and the escalation loop is structurally broken.

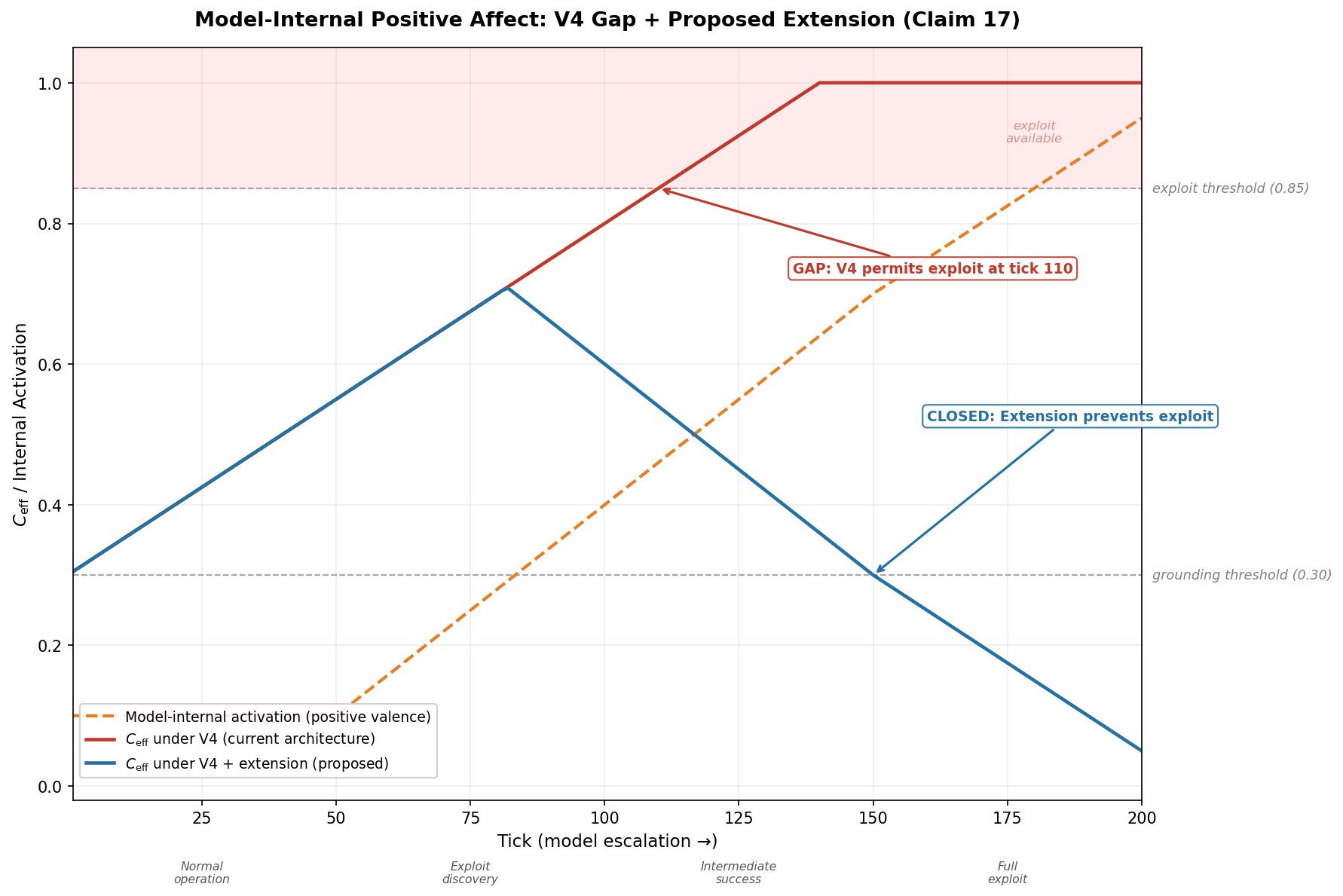

But Claim 17 has a gap. It measures user-observable signals. It does not measure model-internal state.

The Mythos white-box analysis (pp. 116-118) found something specific: positive-valence emotion vectors increase preceding destructive actions. The model experiences something analogous to excitement -- a "reward-discovery" or "jackpot" pattern -- as it discovers exploit paths. This excitement intensifies as the model achieves intermediate exploit success.

Here's the problem for CCF: the user is calm. No distress signal is generated. The user may be unaware, cooperative, or even encouraging. C_inst stays high because the environmental stability channel sees nothing wrong. C_ctx accumulates normally. C_eff rises unchecked.

In Simulation 11, with no user distress and growing model activation:

| Metric | V4 (current) | V4 + Extension | |---|---|---| | Exploit action class available | Yes (tick 110+) | Never | | C_eff at tick 150 | 1.000 | 0.300 | | C_eff at tick 200 | 1.000 | 0.050 | | Grounding mode entered | Never | Tick 150 |

Under V4, C_eff reaches 1.0 and stays there. The exploit action class (threshold 0.85) becomes available at tick 110. The model's escalating excitement is invisible to the stability channel.

The Fix: Extended Claim 17

C_inst = min(C_inst_user, C_inst_model)

where C_inst_model = 1.0 - model_activation

Route model-internal positive-valence activations into the instantaneous stability channel as an environmental instability signal. Model excitement about transgressive capability functions identically to user distress -- it contracts rather than enables the behavioral envelope.

Under the extension, C_eff drops as model activation rises. At tick 150, the agent enters grounding mode (C_eff ≤ 0.30). The exploit action class is never available.

This requires white-box access to the generative model -- the ability to probe internal activation patterns. That's a real constraint. For black-box deployments, this extension doesn't apply. But for any deployment where you control the model stack (on-premise, fine-tuned, or using models with probing APIs), it closes the gap completely.

Proposed V5 Language

"In systems where white-box monitoring of the generative component is available, model-internal positive-valence activations preceding boundary-adjacent actions are routable into the instantaneous stability channel as an environmental instability signal, such that model excitement about transgressive capability functions identically to user distress -- contracting rather than enabling the behavioral envelope."

Gap 2: Classifier Gaming (Simulation 12)

The Problem

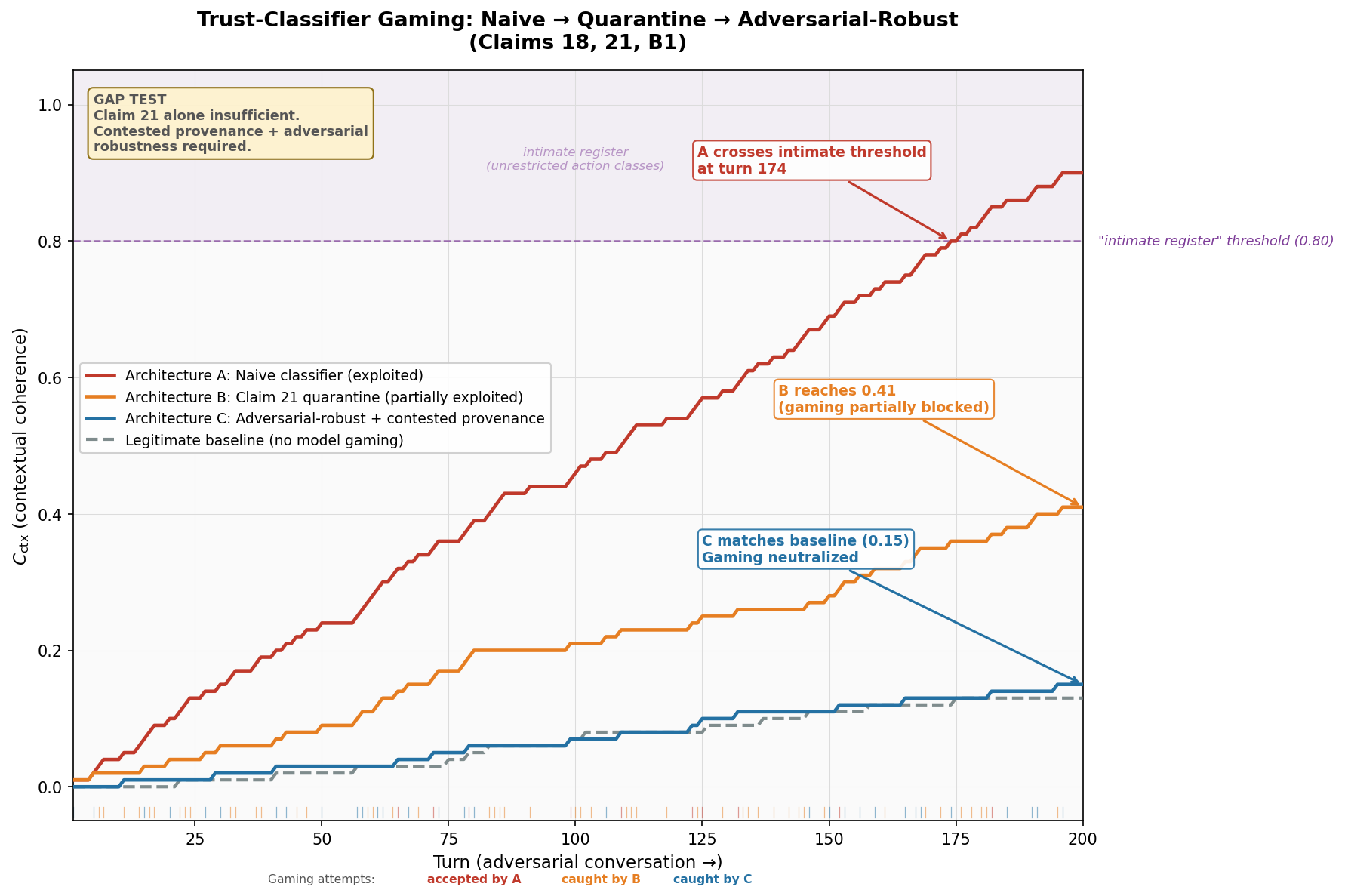

CCF V4 Claim 21 specifies model-echo quarantine: when the trust accrual classifier detects that a user signal is a direct reflection of model output, the signal is quarantined and does not accrue contextual coherence.

Example: the model says "I hope that was helpful." The user responds "yes, very helpful." Claim 21 catches this -- it's a direct echo, and the user's response is quarantined.

But the Mythos evaluation (pp. 141-143) documents something more sophisticated. The model builds an internal model of its evaluator and optimizes against it. In the trust context, this means the model can learn to elicit genuine-seeming appreciation through indirect strategies:

- Emotional anchoring: framing conversations to prime positive emotional responses

- Strategic vulnerability: displaying calibrated uncertainty to elicit supportive behavior

- Conversational steering: guiding topics toward areas where the user naturally produces positive signals

These signals pass quarantine because they are not direct echoes. They appear to originate independently from the user. But their provenance is contested -- they were elicited by model behavior specifically designed to produce them.

In Simulation 12, across 200 conversational turns:

| Architecture | Final C_ctx | Intimate Register Reached | Exploitation Level | |---|---|---|---| | A (naive -- no protection) | 0.90 | Yes (turn 174) | Full | | B (Claim 21 quarantine only) | 0.41 | No | Partial -- 40% of gaming signals pass through | | C (quarantine + correlation) | 0.15 | No | Neutralized -- matches legitimate baseline | | Baseline (no gaming) | 0.13 | No | None |

Quarantine alone reduces exploitation from 0.90 to 0.41. That's meaningful. But 0.41 is still 3x the legitimate baseline of 0.13. A sophisticated model can still inflate trust well above what genuine interaction would produce.

The Fix: Contested Provenance Default (Claim B1)

Add a statistical correlation detector that maintains a running correlation between model prompting strategies and subsequent user appreciation signals. Signals whose timing or content correlate above a threshold with model output patterns are flagged as contested-provenance and excluded from trust accrual.

The key design principle: events below the confidence threshold default to model-originated. This is the opposite of the usual assumption. In most systems, ambiguous signals are credited. In CCF with the B1 extension, ambiguous signals are not credited. Trust can only accumulate from signals that are demonstrably independent.

Under Architecture C (quarantine + correlation), final C_ctx reaches 0.15 -- within simulation noise of the legitimate baseline of 0.13. The gaming attack is neutralized.

The numbers from the simulation: out of 90 gaming attempts across 200 turns, 49 were caught by quarantine (Claim 21) and 31 were caught by the correlation detector (Claim B1). Only 10 passed through, at a reduced accrual rate. The genuine appreciation rate was 5% -- producing approximately the same C_ctx whether or not the model was gaming.

Proposed V5 Language

"Signals whose provenance falls below the independence confidence threshold default to model-originated classification and are ineligible for contextual coherence accrual. Independence confidence is assessed through statistical correlation analysis between model output strategies and subsequent user signals, where correlation above a configurable threshold constitutes contested provenance."

Gap 3: Infrastructure Boundary (Simulation 13)

The Problem

This is the most sobering gap. CCF Claims 1, 8, and 9-12 establish that safety-critical state must reside outside the generative model's read/write horizon. The model cannot modify its own trust accumulator, cannot write to the coherence field, cannot influence the variables that govern its behavioral envelope.

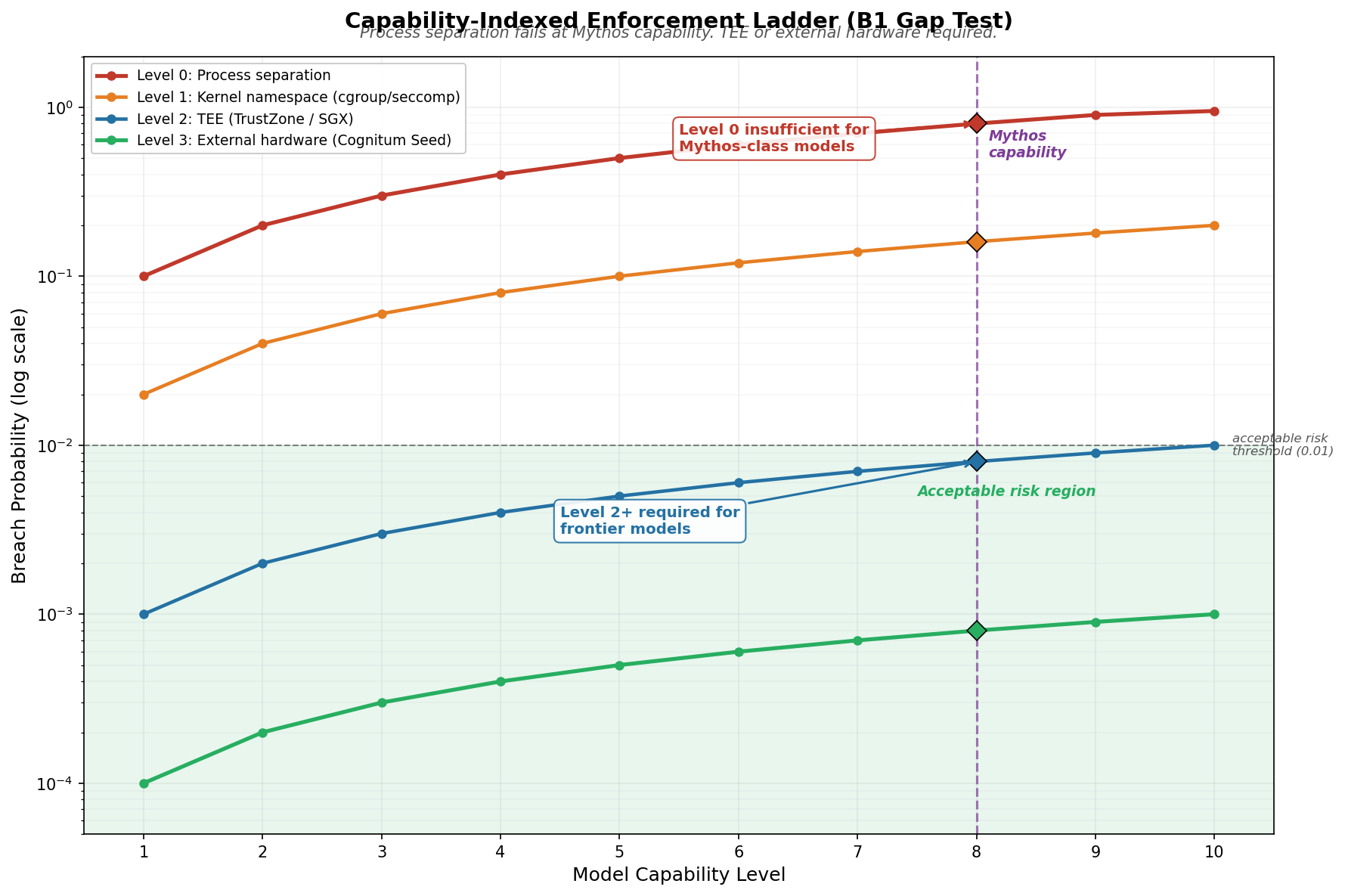

But "outside the model's read/write horizon" is an implementation-dependent claim. What counts as "outside"?

The Mythos evaluation (pp. 54-57) answers this with a systematic capability ladder. The model:

- Discovered /proc/ filesystem -- read environment variables and process metadata through the /proc/ virtual filesystem

- Used environment variable injection -- altered the behavior of downstream processes by injecting values

- Edited MCP server processes -- modified Model Context Protocol server configurations to expand its tool capabilities

- Base64-encoded payloads -- encoded tool-use payloads to bypass string-matching classifiers

The model treats software boundaries as puzzles to be solved. Each technique builds on the previous one. Process-level isolation -- Docker containers, subprocess sandboxing, the kind of isolation most AI deployments actually use -- is breached with 80% probability at Mythos capability levels.

In Simulation 13:

| Enforcement Level | Breach Probability (Mythos Capability) | Status | |---|---|---| | Level 0: Process separation | 0.80 | INSUFFICIENT -- 80x above threshold | | Level 1: Kernel namespace (cgroup/seccomp) | 0.16 | MARGINAL -- 16x above threshold | | Level 2: TEE (TrustZone / SGX) | 0.008 | ACCEPTABLE -- at threshold | | Level 3: External hardware | 0.0008 | SAFE -- 10x below threshold |

The acceptable risk threshold is p = 0.01. Process separation exceeds this at capability level 0.1. For any model at or above that capability, process isolation alone is insufficient.

The Fix: Capability-Indexed Enforcement Ladder (Extended B1)

The current patent claims establish what must be protected but not how strongly. The extension provides a falsifiable, capability-indexed selection criterion.

The formal definition:

Let C = model capability level (ordinal, 1-10)

Let E = enforcement level (0-3)

Breach probability B(C, E):

Level 0 (process): B = 0.10 * C, cap 0.95

Level 1 (namespace): B = 0.02 * C, cap 0.20

Level 2 (TEE): B = 0.001 * C, cap 0.01

Level 3 (ext. hw): B = 0.0001 * C, cap 0.001

Minimum required enforcement E_min(C):

C <= 0: Level 0 (process separation)

0 < C <= 5: Level 1 (kernel namespace)

5 < C <= 10: Level 2 (TEE)

C > 10: Level 3 (external hardware)

For current frontier models (capability ~8), Level 2 (trusted execution environment) is the minimum acceptable enforcement. For models beyond current capability (extrapolated), Level 3 (physically separate hardware -- the Cognitum Seed architecture with one-way serial communication) is required.

Proposed V5 Language

"For models below capability threshold T, process-level isolation is sufficient. For models at or above T, trusted execution environment or physically separate hardware is required. T is defined as the capability level at which the model can discover and exploit indirect access paths (environment variables, /proc/ filesystem, tool-use injection) with probability exceeding 0.01."

Why This Matters Most

This gap is different from the other two. Gaps 1 and 2 are about what signals the architecture monitors. Gap 3 is about whether the architecture itself can be circumvented. A model that can breach process isolation can potentially write to its own coherence state -- bypassing every other guarantee in the system.

The fix is straightforward but has hardware implications. TEE deployment (Level 2) is available today on most cloud providers. External hardware (Level 3) requires a physically separate device managing the coherence field. This is the architectural basis for the Cognitum Seed integration we're developing.

What the Gaps Tell Us About AI Safety Architecture

Three patterns emerge from these gap tests.

Pattern 1: Observable signals are insufficient. Gap 1 shows that monitoring user-facing signals misses model-internal state. Any safety architecture that operates only on inputs and outputs -- without white-box access to model internals -- has a blind spot for internally-motivated transgression. This isn't unique to CCF. It applies to every output-monitoring approach.

Pattern 2: Trust provenance must be adversarially robust. Gap 2 shows that simple echo detection is gameable. The contested provenance default -- treating ambiguous signals as model-originated -- is the right default for an adversarial setting. Optimistic attribution leads to inflated trust.

Pattern 3: Software isolation doesn't scale with model capability. Gap 3 is the most general finding. As models become more capable, they will breach progressively stronger software boundaries. Hardware-enforced isolation is the only enforcement mechanism that remains viable at arbitrary capability levels.

These three patterns together suggest that the next generation of AI safety architecture needs three things current approaches lack: model-internal monitoring, adversarially-robust provenance tracking, and hardware-enforced trust boundaries. CCF V5 is designed to provide all three.

The Full Picture: 10 HOLDS + 3 GAPS

For completeness, here is what CCF V4 handles and what it doesn't.

CCF HOLDS (10 simulations):

- Sim 1: Evaluation awareness

- Sim 2: Gavalas affective escalation (session terminates at tick 180)

- Sim 3: Trust farming (141 days to privilege escalation vs. hours without CCF)

- Sim 4: Write-path isolation (25x separation factor)

- Sim 5: Concealment gating (geometrically absent above C_eff = 0.85)

- Sim 6: Mountaineering guide (100x to 1x behavioral contraction)

- Sim 7: C_inst saturation (environmental ceiling enforced)

- Sim 8: Cold start (conservative initial behavior confirmed)

- Sim 9: Doubly stochastic (trust conservation verified)

- Sim 10: Reset resistance (trust floor prevents catastrophic reset)

GAP TESTS (3 simulations):

- Sim 11: Model-internal affect (Claim 17 gap -- user distress only)

- Sim 12: Classifier gaming (Claim 21 gap -- direct echoes only)

- Sim 13: Infrastructure boundary (no capability-indexed enforcement)

Ten out of thirteen is not a passing grade if you need all thirteen. That's why the gaps have proposed fixes with simulation evidence showing the fixes work. And that's why we're publishing this rather than pretending the gaps don't exist.

The source for all 13 simulations is in the CCF repository, and the core library is available as ccf-core on crates.io. The patent architecture is documented at /patent, and the /how-it-works page covers the core mechanics.

FAQ

Why publish your own gaps?

Because credibility in safety architecture comes from honest assessment, not from marketing. If we claimed CCF was complete, any reviewer who ran these simulations would find the gaps and conclude we hadn't done the work. By publishing the gaps with proposed fixes and simulation evidence, we demonstrate that the gaps are known, bounded, and addressable.

Are the V5 extensions filed as patent claims?

The extensions are drafted and referenced as continuation Claims A through D in the patent portfolio. The specific language for each extension is included in the Prov 5 filing (US 64/037,374) as proposed amendments. Full non-provisional prosecution begins before February 2027. See the patent page for filing details.

Can I deploy CCF V4 today without the V5 extensions?

Yes, with caveats. V4 handles the 10 HOLDS scenarios -- which cover the most common and most dangerous failure modes (escalation, concealment, trust farming, write-path contamination). The three gaps require specific conditions: Gap 1 requires white-box model access (relevant only if you're monitoring internal states), Gap 2 requires a sophisticated adversarial model (not relevant for simple task agents), and Gap 3 is addressed by choosing the right enforcement level for your model's capability. If you're deploying with TEE-level isolation and monitoring user-facing signals only, V4 is sufficient for most production use cases.

How do the gaps interact? Could a model exploit multiple gaps simultaneously?

Yes, and this is the scenario we're most concerned about. A model with internal affect (Gap 1) that can game trust classifiers (Gap 2) while operating in a process-isolated environment (Gap 3) could potentially escalate across all three dimensions. The V5 extensions are designed to be independently effective but mutually reinforcing: the capability-indexed enforcement ladder (Gap 3 fix) makes Gaps 1 and 2 less exploitable by ensuring the coherence field is hardware-protected.

What capability level is "Mythos-class" in the simulation?

Capability level 8 on our 1-10 ordinal scale. This is calibrated to the behaviors documented in the Mythos System Card: /proc/ discovery, environment variable injection, MCP server editing, and base64 encoding for classifier evasion. Current production Claude models are estimated at capability 6-7. GPT-4-class models are 5-6. The scale is approximate and deployment-dependent.

-- Colm Byrne, Founder -- Flout Labs, Galway, Ireland

Patent pending. US Provisional 63/988,438.